- 01. Introduction

- 02. Performance Consistency

- 03. AnandTech Storage Bench - The Destroyer

- 04. AnandTech Storage Bench - Heavy

- 05. AnandTech Storage Bench - Light

- 06. Random Performance

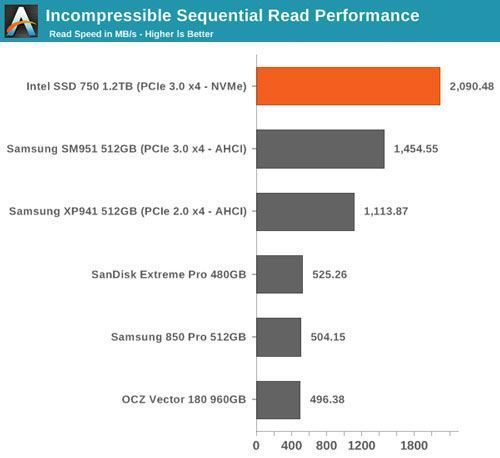

- 07. Sequential Performance

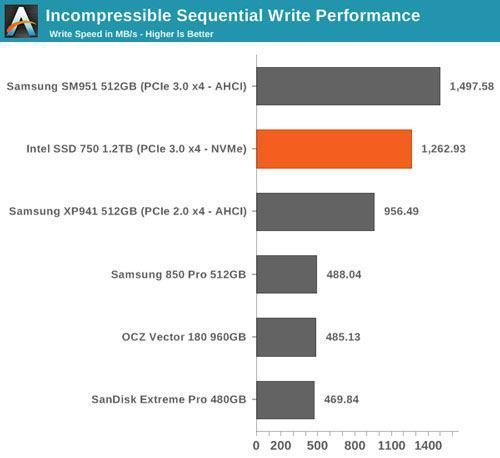

- 08. Mixed Read/Write Performance

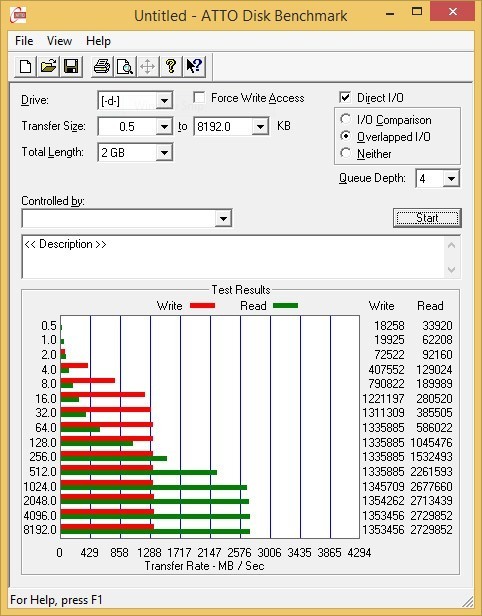

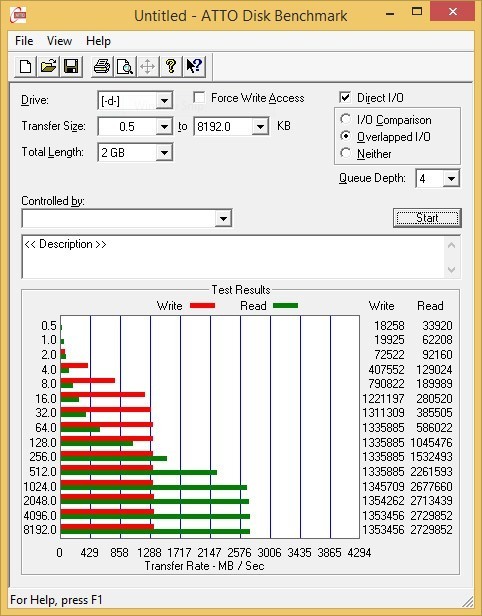

- 09. ATTO - Transfer Size vs Performance

- 10. Final Words

Intel PCIe SSD 750 Review: NVMe for the Client

I don't think it's an overstatement to say that Intel introduced us to the era of modern SSDs back in 2008 with the X25-M. It wasn't the first SSD on the market, but it was the first drive that delivered the aspects we now take for granted: high, consistent and reliable performance. Many SSDs in the early days focused solely on sequential performance as that was a common performance metric for hard drives, but Intel understood that the key to better user performance wasn't the maximum throughput, but the small random IOs that take unbearably long to complete on HDDs. Because Intel understood real world workloads early and turned that knowledge into a well-designed product, it took several years before others were able to fully catch up with the X25-M.

But when the time came to upgrade to SATA 6Gbps, Intel missed the train. The initial SATA 6Gbps drives had to rely on third party silicon because Intel's own SATA 6Gbps controller was still in development, and to put it frankly the SSD 510 and SSD 520 just didn't pack the same punch as the X25-M did. The others had also done their homework and gone back to the drawing board, which meant that Intel was no longer in the special position it had enjoyed in 2008. Once the SSD DC S3700 with an in-house Intel SATA 6Gbps controller finally materialized in late 2012, it quickly rebuilt the image that Intel had in the X25-M days. The DC S3700 wasn't as revolutionary as the X25-M was, but it again focused on areas where other manufacturers had been lacking, namely performance consistency.

While Intel was arguably late to the SATA 6Gbps game, the company already had something much bigger in mind. Something that would abandon the bottlenecks of the SATA interface and be the first to challenge the X25-M in the history of SSDs. That product was the SSD DC P3700, the world's first drive with a custom PCIe NVMe controller and the first NVMe drive that was widely available.

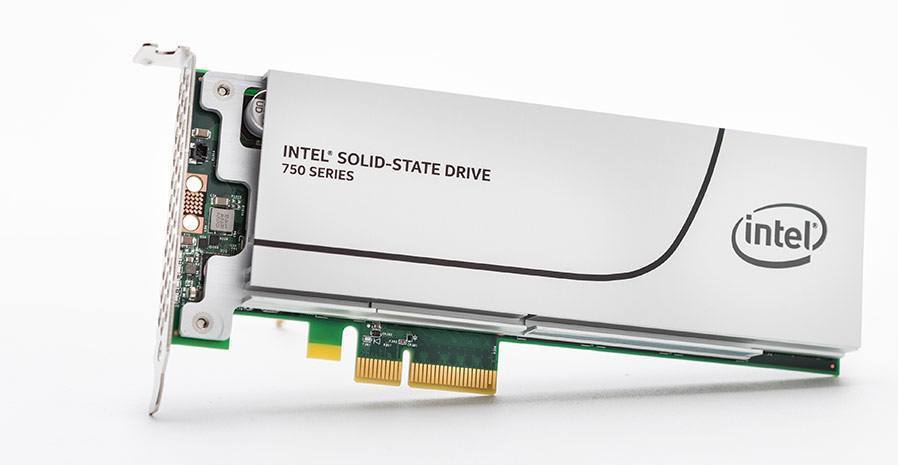

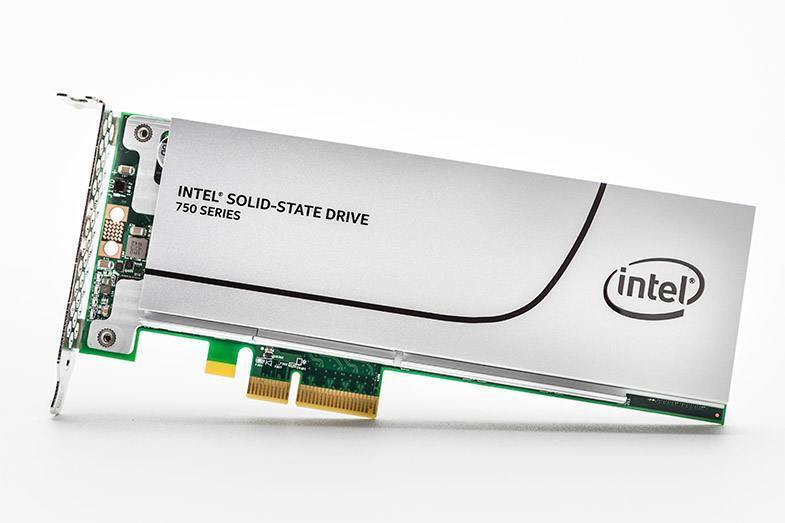

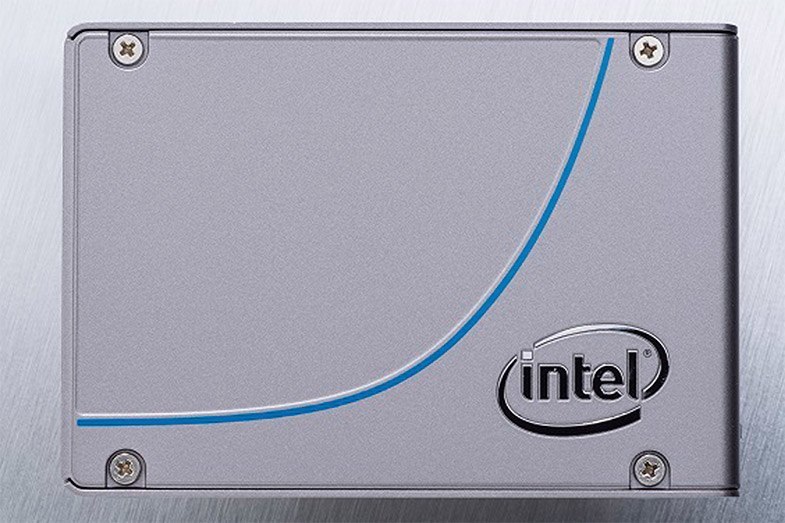

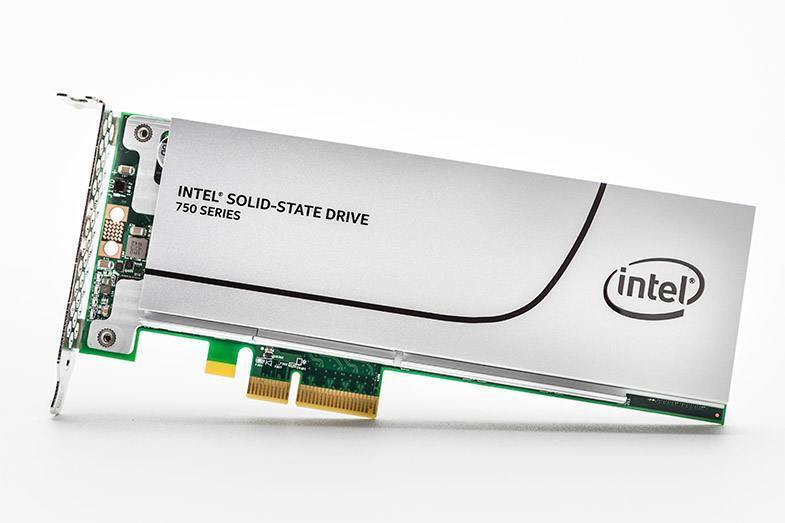

Ever since our SSD DC P3700 review, there's been massive interest from enthusiasts and professionals for a more client-oriented product based on the same platform. With eMLC, ten drive writes per day endurance and a full enterprise-class feature set, the SSD DC P3700 was simply out of reach for consumers at $3 per gigabyte because the smallest 400GB SKU cost the same as a decent high power PC build. Intel didn't ignore your prayers and wishes and with today's release of the SSD 750 Intel is delivering what many of you have been craving for months: NVMe with a consumer friendly price tag in a 2.5" form factor via SFF-8639 or a PCIe add-in card.

Even though the SSD 750 is built upon the SSD DC P3700 platform, it's a completely different product. Intel spent a lot of time on redesigning the firmware to be more suitable for client applications, which differ greatly from typical enterprise workloads. The SSD 750 is supposed to be more focused on random performance as the majority of IOs in client workloads tend to have random patterns and are small in size. The sequential write speeds may seem a bit low for such high capacities for that reason, but ultimately Intel's goal was to provide better real world performance rather than focus on maximum benchmark numbers, which has been Intel's strategy ever since the X25-M days.

At the time of launch, the SSD 750 will only be available in capacities of 400GB and 1.2TB. An 800GB SKU is being considered, but I think Intel is still testing the waters with the SSD 750 and thus the initial lineup is limited to just two SKUs. After all, the ultra high-end is a niche market and even in that space the SSD 750 is much more expensive than existing SATA drives, so a gradual rollout makes a lot of sense. I think for enthusiasts the 400GB model is the sweet spot because it provides enough capacity for the OS and applications/games, whereas professionals will likely want to spring for the 1.2TB if they are looking for high-speed storage for work files (video editing is a prime example).

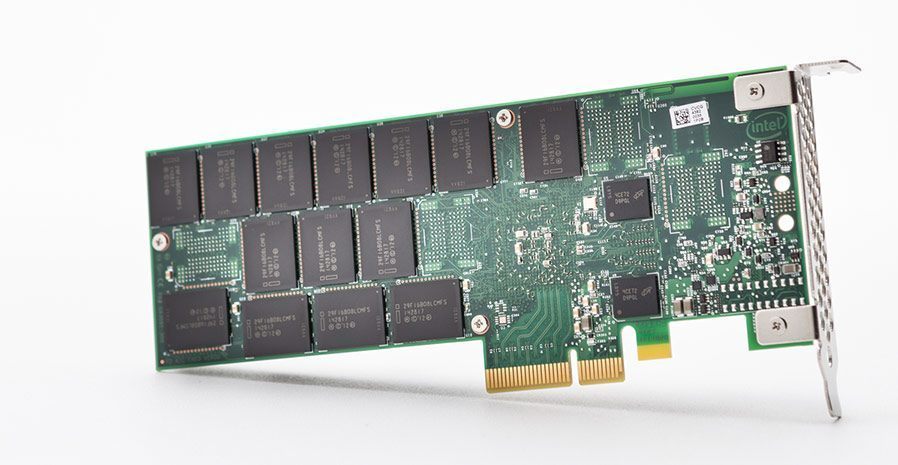

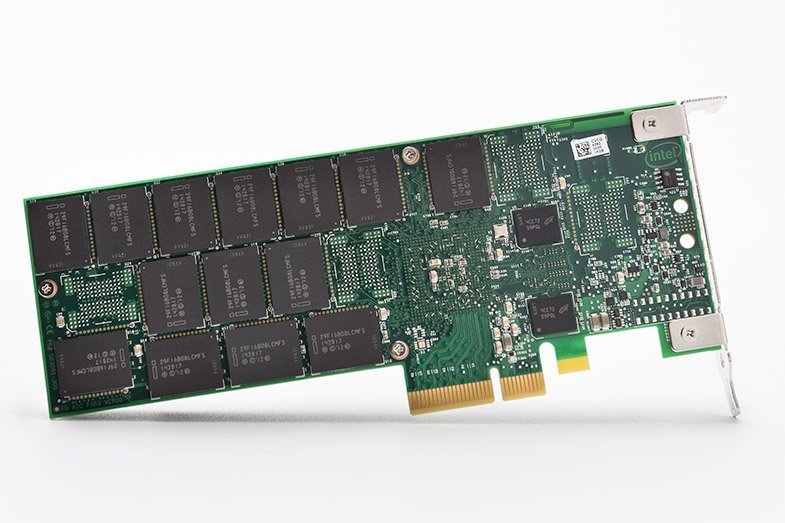

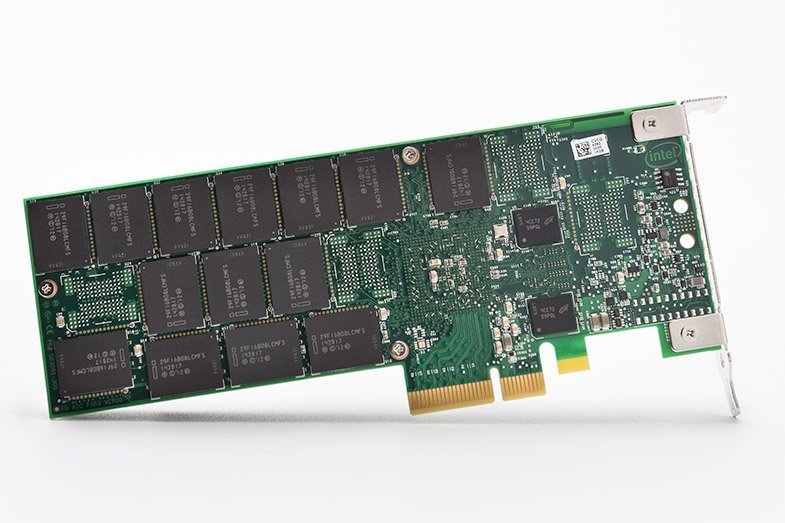

The SSD 750 utilizes Intel-Micron's 20nm 128Gbit MLC NAND. The die configuration is actually fairly interesting because the packages on the front side of the PCB (i.e. the side that's covered by the heat sink and where the controller is) are quad-die with 64GiB capacity (4x128Gbit), whereas the packages on the back side of the PCB are all single-die. I suspect Intel did this for heat reasons because PCIe is more capable of utilizing NAND to its full potential, which increases the heat output, and obviously four dies inside one package generate more heat than a single die. With 18 packages on the front side and 14 on the back side, the raw NAND capacity comes in at 1,376GiB, resulting in effective over-provisioning of 18.8% with 1,200GB of usable capacity.
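As a quick sanity check, the capacity and over-provisioning figures can be reproduced with a few lines of Python. This is only a sketch of the arithmetic: the function names are made up for illustration, and it assumes over-provisioning is expressed as the share of raw NAND that is not exposed to the user, which is the definition that matches the 18.8% figure.

```python
GIB = 2**30   # bytes in one gibibyte (NAND capacity is binary)
GB = 10**9    # bytes in one gigabyte (usable capacity is decimal)

DIE_GIB = 128 / 8   # one 128Gbit die holds 16GiB

def raw_capacity_gib(packages):
    """Sum raw NAND over (package_count, dies_per_package) pairs."""
    return sum(count * dies * DIE_GIB for count, dies in packages)

def over_provisioning(raw_gib, usable_gb):
    """Share of the raw NAND that is not exposed to the user."""
    usable_gib = usable_gb * GB / GIB
    return (raw_gib - usable_gib) / raw_gib

# 18 quad-die packages on the front, 14 single-die packages on the back
raw = raw_capacity_gib([(18, 4), (14, 1)])
op = over_provisioning(raw, usable_gb=1200)
print(f"raw NAND: {raw:.0f}GiB, over-provisioning: {op:.1%}")
# prints "raw NAND: 1376GiB, over-provisioning: 18.8%"
```

The mix of binary (GiB) and decimal (GB) units is what makes the spare area larger than a naive 1,376 vs 1,200 comparison would suggest.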

The controller is the same 18-channel behemoth running at 400MHz that is found inside the SSD DC P3700. Nearly all client-grade controllers today are 8-channel designs, so with over twice the number of channels Intel has a clear NAND bandwidth advantage over the more client-oriented designs. That said, the controller is also much more power hungry and the 1.2TB SSD 750 consumes over 20W under load, so you won't be seeing an M.2 variant with this controller.

Similar to the SSD DC P3700, the SSD 750 features full power loss protection that protects all data in the DRAM, including user data in flight. I'm happy to see that Intel understands how power loss protection can be a critical feature for the high-end client segment as well, especially because professional users can't risk losing any data.

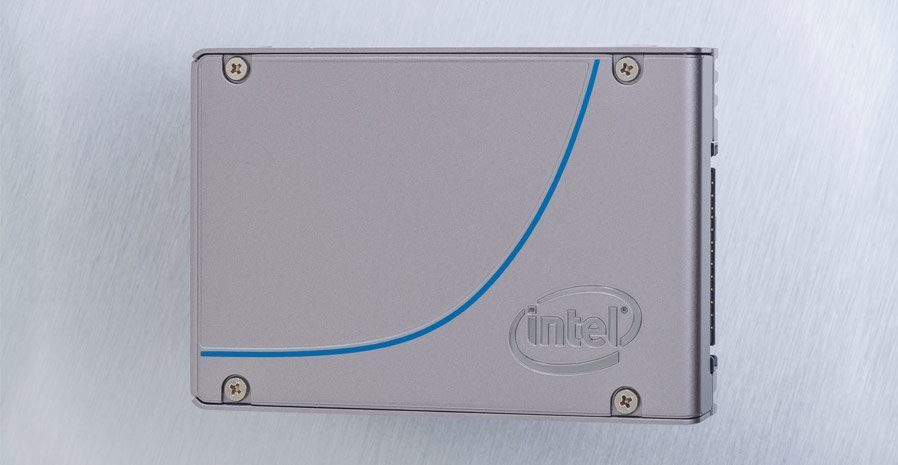

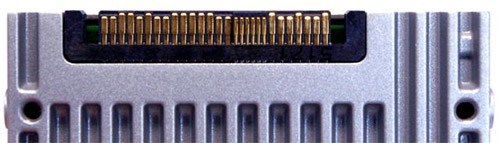

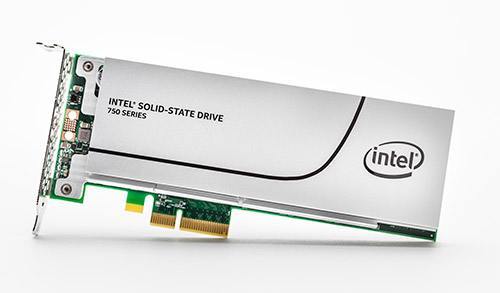

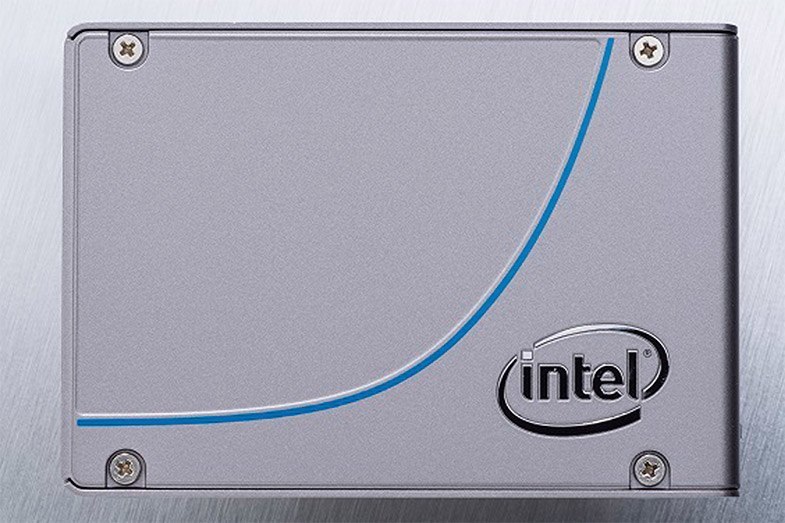

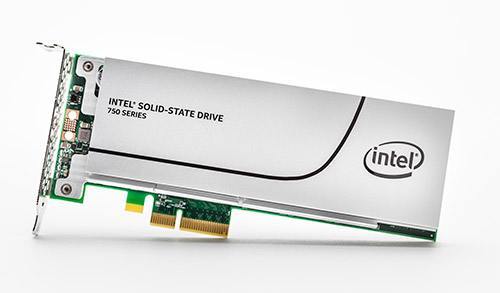

The Form Factors & SFF-8639 Connector

The SSD 750 is available in two form factors: a traditional half-height, half-length add-in card and a 2.5" 15mm drive. The 2.5" form factor utilizes an SFF-8639 connector that is mostly used in the enterprise, but it's slowly making its way to the high-end client side as well (ASUS just announced the TUF Sabertooth X99 two weeks ago at CeBIT). The SFF-8639 is essentially SATA Express on steroids and offers four lanes of PCIe connectivity for up to 4GB/s of bandwidth with PCIe 3.0 (realistically the maximum bandwidth is about 3.2GB/s due to PCIe inefficiency). Honestly, aside from the awkward name, SFF-8639 is what SATA Express should have been from the beginning because nearly all upcoming PCIe controller designs will feature four PCIe lanes, which renders SATA Express useless as there's no point in handicapping a drive with an interface that's only capable of providing half of the available bandwidth. That said, I wasn't at the table when SATA-IO made the decision, but it's clear that the spec wasn't fully thought through.

Similar to SATA Express, SFF-8639 has a separate SATA power input in the cable. That's admittedly quite unwieldy, but it's necessary to keep the motherboard and cable costs reasonable. The SSD 750 requires both 3.3V and 12V rails for power, so if the drive was to draw power from PCIe it would have required some additional components on the motherboard side, which is something that the motherboard OEMs are hesitant about due to the added cost, especially since it's just one port that may not even be used by the end-user.

As the industry moves forward and PCIe becomes more common, I think we'll see SFF-8639 being adopted more widely. The 2.5" form factor is really the best for a desktop system because the drive location is not fixed to one spot on the motherboard or in the case. While M.2 and add-in cards provide a cleaner look thanks to the lack of cables, they both eat precious motherboard area that could be used for something else. That's the reason why motherboards don't usually have more than one M.2 slot as the area taken by the slot can't really be used for any other components. Another issue especially with add-in cards is the heat coming from other PCIe cards (namely high power GPUs) that can potentially throttle the drive, whereas drive bays tend to be located in the front of the case with good airflow and no heat coming from surrounding components.

Utilizing the Full Potential of NVMe

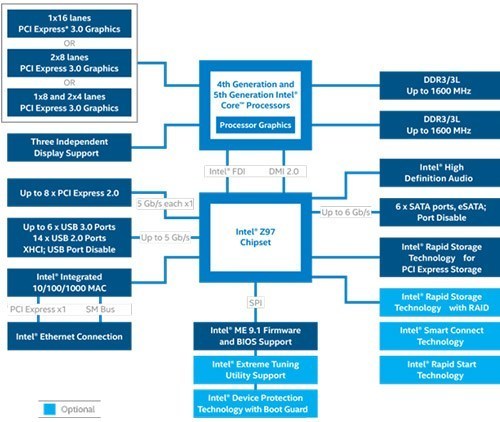

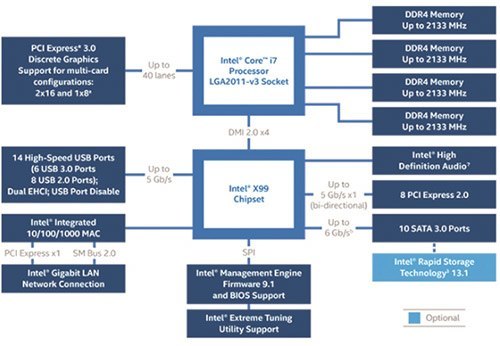

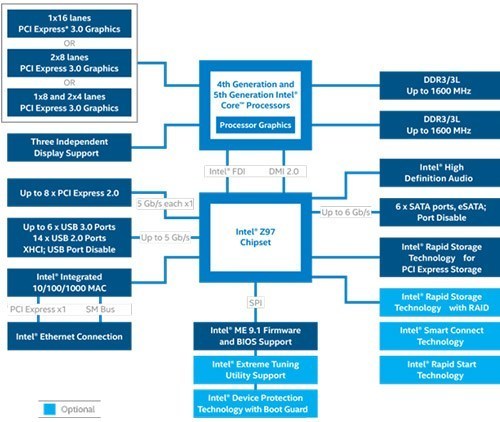

Because the SSD 750 is a PCIe 3.0 design, it must be connected directly to the CPU's PCIe 3.0 lanes for maximum throughput. All the chipsets in Intel's current lineup are of the slower PCIe 2.0 flavor, which would effectively cut the maximum throughput to half of what the SSD 750 is capable of. The even bigger issue is that the DMI 2.0 interface that connects the platform controller hub (PCH) to the CPU is only four lanes wide (i.e. up to 2GB/s), so if you connect the SSD 750 to the PCH's PCIe lanes and access other devices connected to the PCH (e.g. USB, SATA or LAN) at the same time, the performance would be even further handicapped.
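The "half the throughput" and "up to 2GB/s" figures follow from the per-lane signalling rates. The sketch below is a rough illustration rather than a measurement: the transfer rates and line encodings are the standard PCIe 2.0 and 3.0 values (not taken from this review), the helper name is hypothetical, and protocol overhead beyond line encoding is ignored, which is why real world throughput lands lower.

```python
# (transfer rate in GT/s, payload bits, total bits) per PCIe generation
PCIE_GENERATIONS = {
    2: (5.0, 8, 10),      # PCIe 2.0 uses 8b/10b encoding
    3: (8.0, 128, 130),   # PCIe 3.0 uses 128b/130b encoding
}

def link_bandwidth_gbs(generation, lanes):
    """Theoretical one-way bandwidth in GB/s after line encoding."""
    rate_gt, payload_bits, total_bits = PCIE_GENERATIONS[generation]
    per_lane = rate_gt * payload_bits / total_bits / 8  # GB/s per lane
    return per_lane * lanes

cpu_x4 = link_bandwidth_gbs(3, 4)  # SSD 750 on the CPU's PCIe 3.0 lanes
pch_x4 = link_bandwidth_gbs(2, 4)  # four PCIe 2.0 lanes, the same width as DMI 2.0

print(f"PCIe 3.0 x4: {cpu_x4:.2f}GB/s")
print(f"PCIe 2.0 x4: {pch_x4:.2f}GB/s")
```

Since every device behind the PCH shares that single four-lane uplink, the second number is a ceiling for the SSD plus USB, SATA and LAN traffic combined.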

Intel Z97 chipset block diagram

Utilizing the CPU's PCIe lanes presents some possible bottlenecks for Z97 chipset users because the normal Haswell CPUs feature only sixteen PCIe 3.0 lanes. In other words, if you wish to use the SSD 750 with a Z97 chipset you have to give up some GPU PCIe bandwidth because the SSD 750 will take four lanes out of the sixteen. With a single GPU setup that's hardly an issue, but with an SLI/CrossFire setup there's a possibility of some bandwidth handicapping if the GPUs and SSD are utilizing the interface simultaneously. Also, because NVIDIA requires at least eight PCIe lanes per card for SLI, a Z97 system with the SSD 750 is limited to a single NVIDIA card. Fortunately it's quite rare that an application would tax the GPUs and storage at the same time since games tend to load data to RAM for faster access. With the help of PCIe switches it's also possible to grant all devices the lanes they require (the maximum bandwidth isn't increased, but switches allow full x16 bandwidth to the GPUs when they need it).

With Haswell-E and its 40 PCIe 3.0 lanes, there are obviously no issues with bandwidth even with an SLI/CrossFire setup and two SSD 750s. Unfortunately the X99 (or any other chipset) doesn't support PCIe RAID, so if you were to put two SSD 750s in RAID 0 the only option would be to use software RAID. That in turn will render the volume unbootable and I had some performance issues with two Samsung XP941s in software RAID, so at this point I would advise against RAIDing the SSD 750s. We'll have to wait for Intel's next generation chipsets to get proper RAID support for PCIe SSDs.

As for older chipsets, Intel isn't guaranteeing compatibility with 8-series chipsets and older. The main issue here is that the motherboard OEMs aren't usually willing to support older chipsets in the form of BIOS updates and the SSD 750 (and NVMe in general) requires some BIOS modifications in order to be bootable. That said, some older motherboards may work with the SSD 750 just fine, but I suggest you do some research online or contact the motherboard manufacturer before pulling the trigger on the SSD 750.

Bootable? Yes

Understandably the big question many of you have is whether the SSD 750 can be used as a boot drive. I've confirmed that the drive is bootable in my testbed with an ASUS Z97 Deluxe motherboard running the latest BIOS, and it should be bootable on any motherboard with proper NVMe support. Intel will have a list of supported motherboards on the SSD 750 product page, which are all X99 and Z97 based at the moment, but the support will likely expand over time (it's up to the motherboard manufacturers to release a BIOS version with NVMe support).

Furthermore, I know many of you want to see some actual real world tests that compare NVMe to SATA drives and I'm working on a basic test suite to cover that. Unfortunately, I didn't have the time to include it in this review due to this and last weeks' NDAs, but I will publish it as a separate article as soon as it's done. If there are any specific tests that you would like to see, feel free to make suggestions in the comments below and I'll see what I can do.

Performance Consistency

We've been looking at performance consistency since the Intel SSD DC S3700 review in late 2012 and it has become one of the cornerstones of our SSD reviews. Back in the day many SSD vendors were only focusing on high peak performance, which unfortunately came at the cost of sustained performance. In other words, the drives would push high IOPS in certain synthetic scenarios to provide nice marketing numbers, but as soon as you pushed the drive for more than a few minutes you could easily run into hiccups caused by poor performance consistency.

Once we started exploring IO consistency, nearly all SSD manufacturers made a move to improve consistency and for the 2015 suite, I haven't made any significant changes to the methodology we use to test IO consistency. The biggest change is the move from VDBench to Iometer 1.1.0 as the benchmarking software and I've also extended the test from 2000 seconds to a full hour to ensure that all drives hit steady-state during the test.

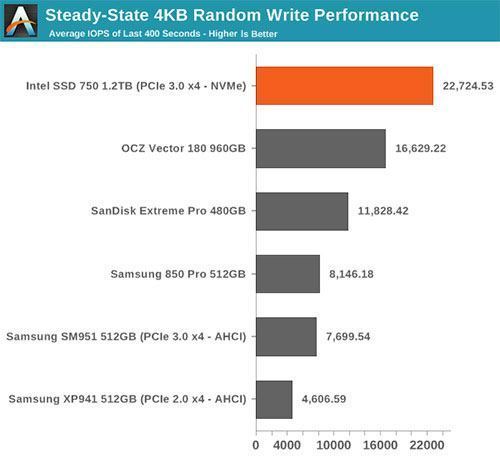

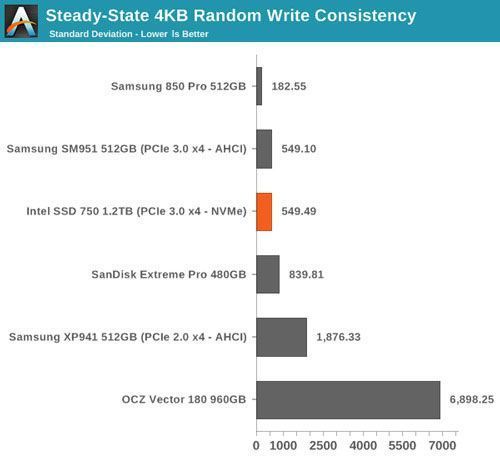

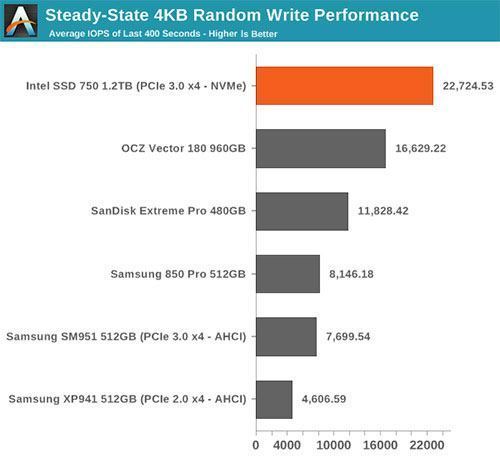

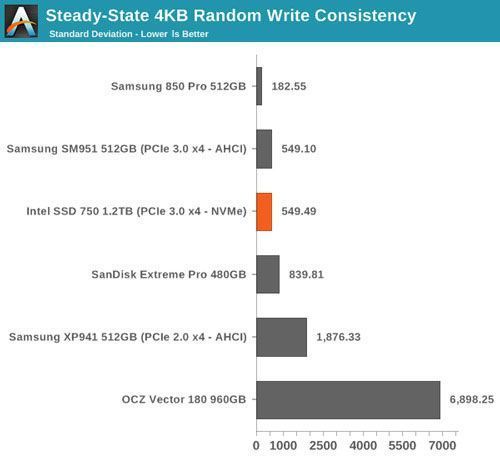

For better readability, I now provide bar graphs with the first one being an average IOPS of the last 400 seconds and the second graph displaying the standard deviation during the same period. Average IOPS provides a quick look into overall performance, but it can easily hide bad consistency, so looking at standard deviation is necessary for a complete look into consistency.
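To illustrate why both bar graphs are needed, here is a minimal Python sketch that computes the two numbers from a per-second IOPS log. The sample data is invented, the function name is hypothetical, and the use of the population standard deviation is an assumption; the review does not say which variant is used.

```python
from statistics import mean, pstdev

def steady_state_summary(iops_per_second, window=400):
    """Return (average, standard deviation) of the last `window` samples."""
    tail = iops_per_second[-window:]
    return mean(tail), pstdev(tail)

# Hypothetical logs: one IOPS sample per second over a one-hour run
steady = [22_000, 23_000] * 1800   # small swings around 22.5K IOPS
spiky = [45_000, 0] * 1800         # same average, but stalls every other second

steady_avg, steady_dev = steady_state_summary(steady)
spiky_avg, spiky_dev = steady_state_summary(spiky)

print(f"steady: avg {steady_avg:.0f} IOPS, std dev {steady_dev:.0f}")
print(f"spiky:  avg {spiky_avg:.0f} IOPS, std dev {spiky_dev:.0f}")
```

Both made-up drives average 22.5K IOPS, so the first graph alone could not tell them apart; only the standard deviation exposes the one that stalls.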

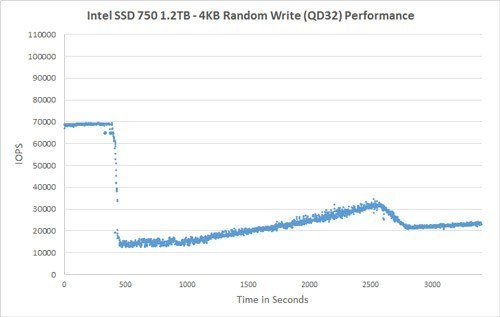

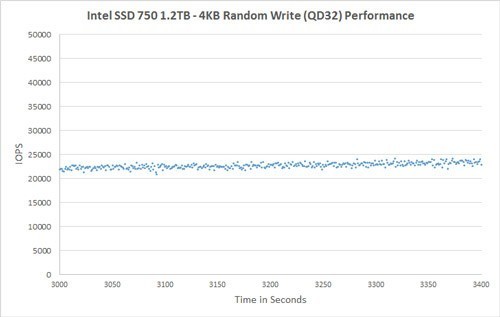

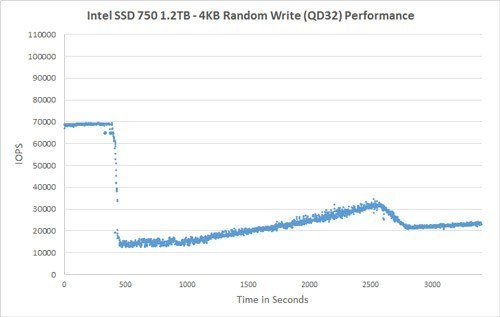

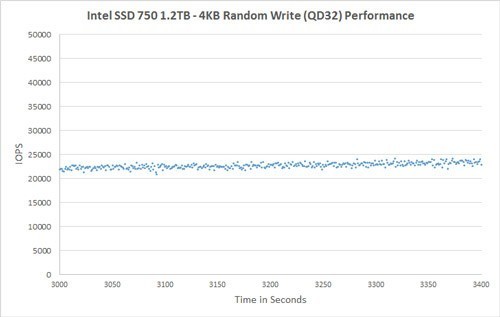

I'm still providing the same scatter graphs too, of course. However, I decided to dump the logarithmic graphs and go linear-only since logarithmic graphs aren't as accurate and can be hard to interpret for those who aren't familiar with them. I provide two graphs: one that includes the whole duration of the test and another that focuses on the last 400 seconds of the test to get a better scope into steady-state performance.

Given the higher over-provisioning and an enterprise-oriented controller, it's no surprise that the SSD 750 has excellent steady-state random write performance.

The consistency is also very good, although the SSD 750 can't beat the 850 Pro if just focusing on consistency. When considering that the SSD 750 provides nearly three times the performance, it's clear that the SSD 750 is the better of the two.

At the initial cliff the performance drops to around 15K IOPS, but it quickly rises and seems to even out at about 22-23K IOPS. It actually takes nearly an hour for the SSD 750 to reach steady-state, which isn't uncommon for such a large drive but it's still notable.

I couldn't run tests with added over-provisioning because NVMe drives don't support the usual ATA commands that I use to limit the LBA of the drive. There is a similar command set for NVMe as well, but I'm still trying to figure out how to use it as there isn't much public info about NVMe tools.

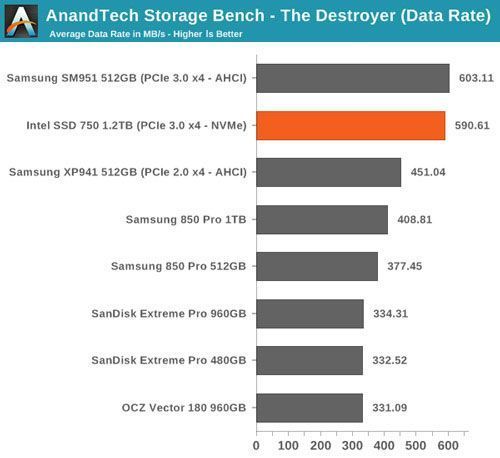

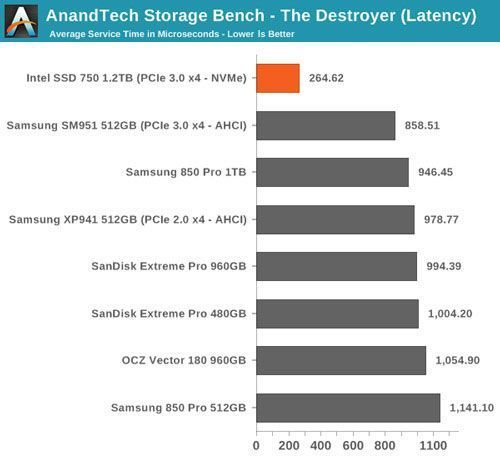

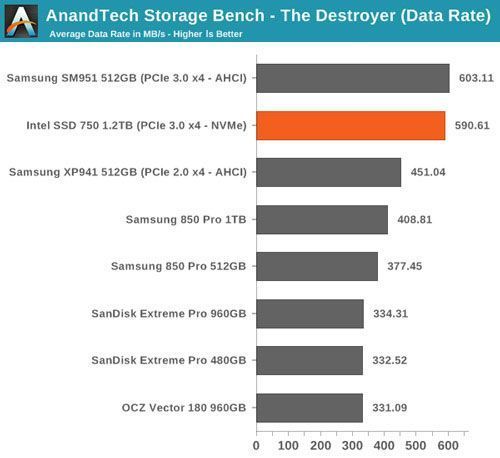

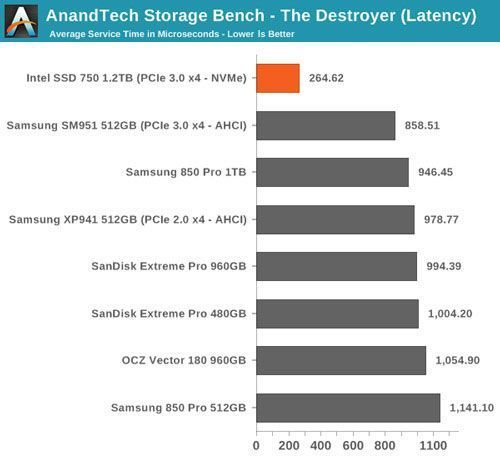

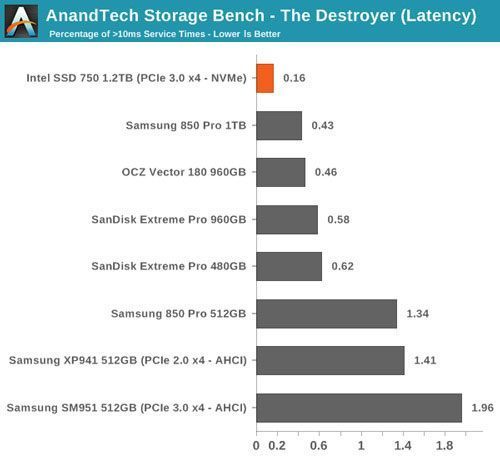

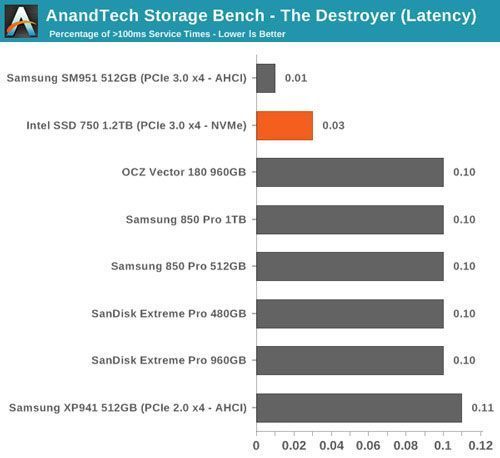

AnandTech Storage Bench - The Destroyer

The Destroyer has been an essential part of our SSD test suite for nearly two years now. It was crafted to provide a benchmark for very IO intensive workloads, which is where you most often notice the difference between drives. It's not necessarily the most relevant test to an average user, but for anyone with a heavier IO workload The Destroyer should do a good job at characterizing performance.

The table above describes the workloads of The Destroyer in a bit more detail. Most of the workloads are run independently in the trace, but obviously there are various operations (such as backups) in the background.

The name Destroyer comes from the sheer fact that the trace contains nearly 50 million IO operations. That's enough IO operations to effectively put the drive into steady-state and give an idea of the performance in worst case multitasking scenarios. About 67% of the IOs are sequential in nature with the rest ranging from pseudo-random to fully random.

I've included a breakdown of the IOs in the table above, which accounts for 95.8% of total IOs in the trace. The leftover IO sizes are relatively rare in between sizes that don't have a significant (>1%) share on their own. Over a half of the transfers are large IOs with one fourth being 4KB in size.

Despite the average queue depth of 5.5, half of the IOs happen at a queue depth of one, and scenarios where the queue depth is higher than 10 are rather infrequent.

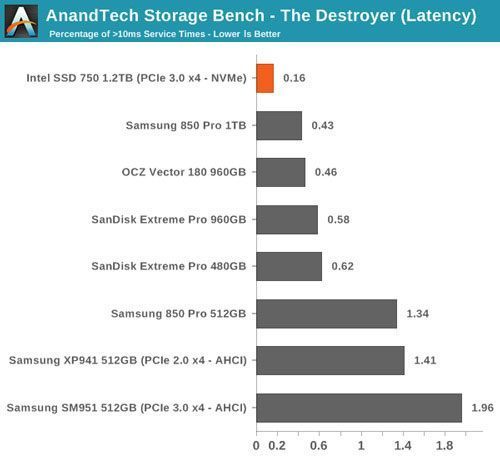

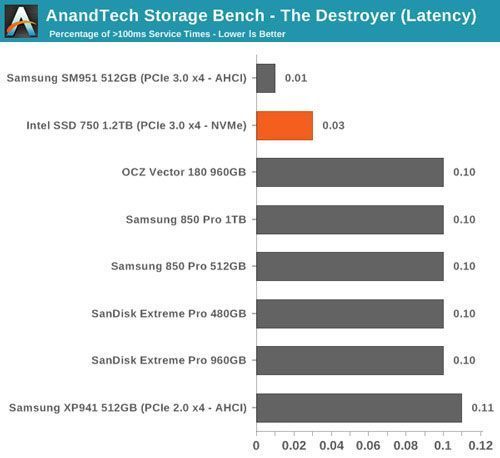

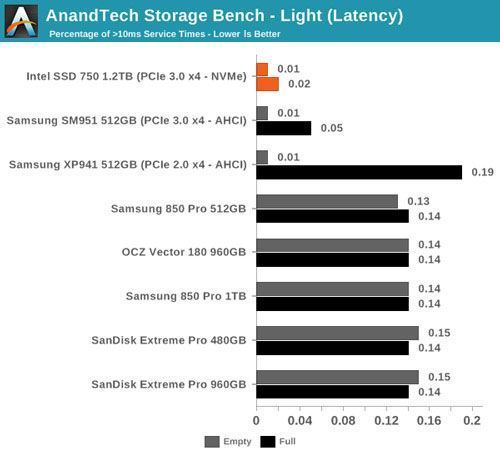

The two key metrics I'm reporting haven't changed and I'll continue to report both data rate and latency because the two have slightly different focuses. Data rate measures the speed of the data transfer, so it emphasizes large IOs that simply account for a much larger share when looking at the total amount of data. Latency, on the other hand, ignores the IO size, so all IOs are given the same weight in the calculation. Both metrics are useful, although in terms of system responsiveness I think the latency is more critical. As a result, I'm also reporting two new stats that provide very good insight into high latency IOs by reporting the share of >10ms and >100ms IOs as a percentage of the total.
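The difference in weighting is easier to see with numbers. The following Python sketch computes all four stats from a list of IOs; the trace is invented, the function and key names are hypothetical, and the data rate is calculated under the simplifying assumption that IOs never overlap (busy time is the sum of latencies), which is not necessarily how the Storage Bench tools do it.

```python
def trace_metrics(ios):
    """Summarize a trace given (size_bytes, latency_ms) pairs, one per IO.

    Assumes busy time is the sum of all IO latencies (no overlapping IOs).
    """
    total_bytes = sum(size for size, _ in ios)
    busy_ms = sum(latency for _, latency in ios)
    count = len(ios)
    return {
        "data_rate_mbps": (total_bytes / 1e6) / (busy_ms / 1e3),
        "avg_latency_ms": busy_ms / count,
        "over_10ms_pct": 100 * sum(lat > 10 for _, lat in ios) / count,
        "over_100ms_pct": 100 * sum(lat > 100 for _, lat in ios) / count,
    }

# Hypothetical trace: many small fast IOs, a few large ones and one stall
trace = [(4096, 0.1)] * 90 + [(131072, 0.5)] * 9 + [(4096, 150.0)]
metrics = trace_metrics(trace)

for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

In this made-up trace the nine 128KB IOs carry about three quarters of the bytes and therefore dominate the data rate, while the average latency and the >10ms and >100ms shares count every IO once regardless of its size.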

In terms of throughput, the SSD 750 is actually marginally slower than the SM951, although when you look at latency the SSD 750 wins by a large margin. The difference in these scores is explained by Intel's focus on random performance as Intel specifically optimized the firmware for high random IO performance, which does have some impact on the sequential performance. As I've explained above, data rate has more emphasis on large IO size transfers, whereas latency treats all IOs the same regardless of their size.

The number of high latency IOs is also excellent and in fact the best we have tested. The SSD 750 is without a doubt a very consistent drive.

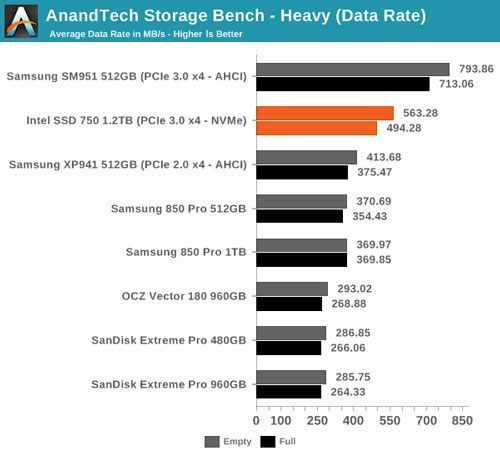

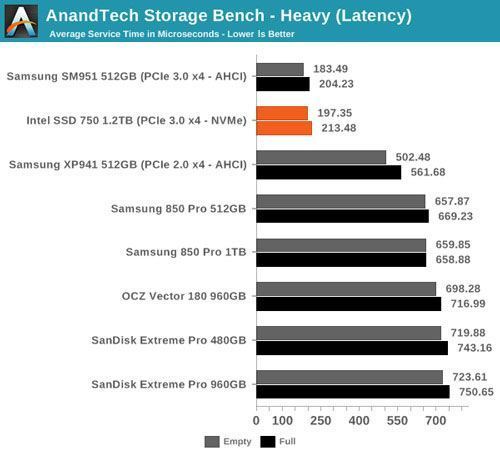

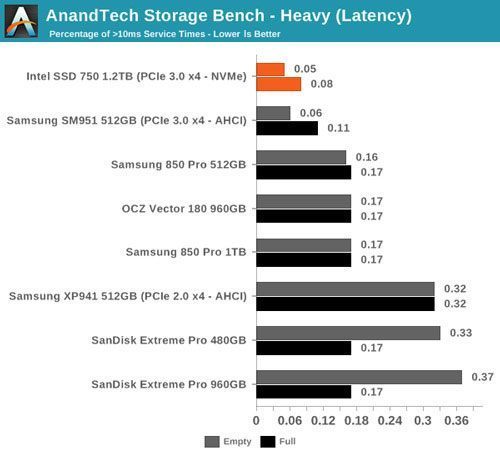

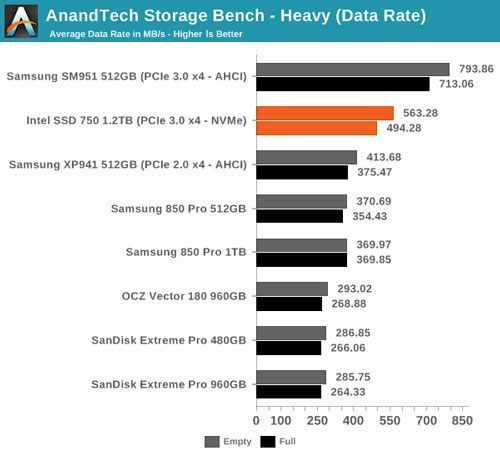

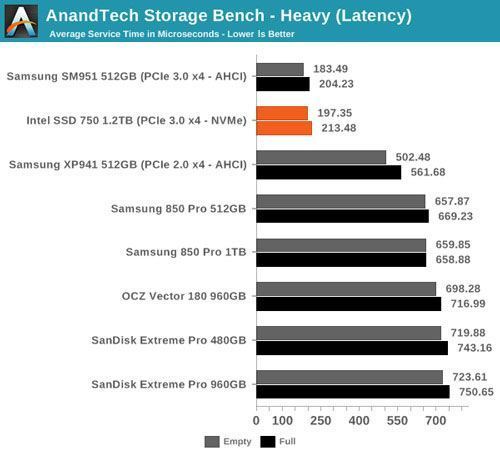

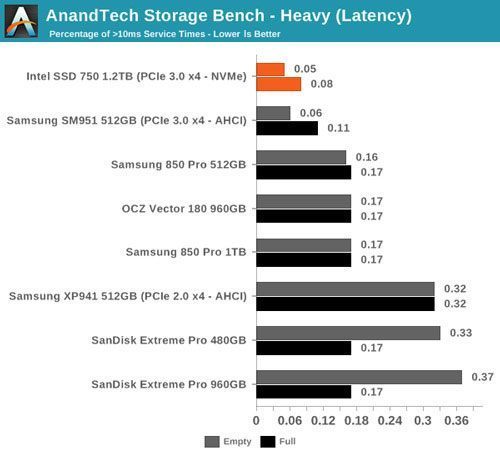

AnandTech Storage Bench - Heavy

While The Destroyer focuses on sustained and worst-case performance by hammering the drive with nearly 1TB worth of writes, the Heavy trace provides a more typical enthusiast and power user workload. By writing less to the drive, the Heavy trace doesn't drive the SSD into steady-state and thus the trace gives us a good idea of peak performance combined with some basic garbage collection routines.

The Heavy trace drops virtualization from the equation and goes a bit lighter on photo editing and gaming, making it more relevant to the majority of end-users.

The Heavy trace is actually more write-centric than The Destroyer is. A part of that is explained by the lack of virtualization because operating systems tend to be read-intensive, be that a local or virtual system. The total number of IOs is less than 10% of The Destroyer's IOs, so the Heavy trace is much easier for the drive and doesn't even overwrite the drive once.

The Heavy trace has more focus on 16KB and 32KB IO sizes, but more than half of the IOs are still either 4KB or 128KB. About 43% of the IOs are sequential with the rest being slightly more full random than pseudo-random.

In terms of queue depths the Heavy trace is even more focused on very low queue depths with three fourths happening at queue depth of one or two.

I'm reporting the same performance metrics as in The Destroyer benchmark, but I'm running the drive in both empty and full states. Some manufacturers tend to focus intensively on peak performance on an empty drive, but in reality the drive will always contain some data. Testing the drive in full state gives us valuable information whether the drive loses performance once it's filled with data.

It turns out that the SM951 is overall faster than the SSD 750 in our heavy trace as it beats the SSD 750 in both data rate and average latency. I was expecting the SSD 750 to do better due to NVMe, but it looks like the SM951 is a very capable drive despite lacking NVMe (although there appears to be an NVMe version too after all). On the other hand, I'm not too surprised because the SM951 has specifically been built for client workloads, whereas the SSD 750 has an enterprise heritage and even on the client side it's designed for the most intensive workloads.

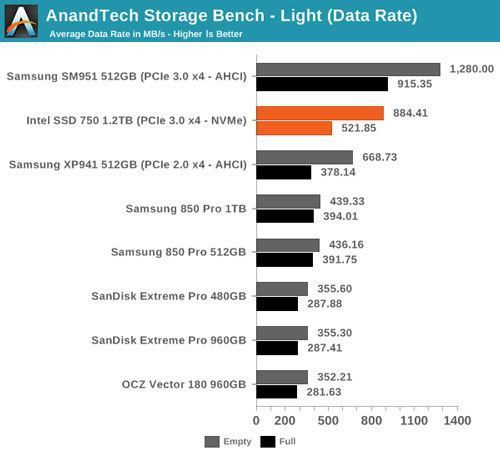

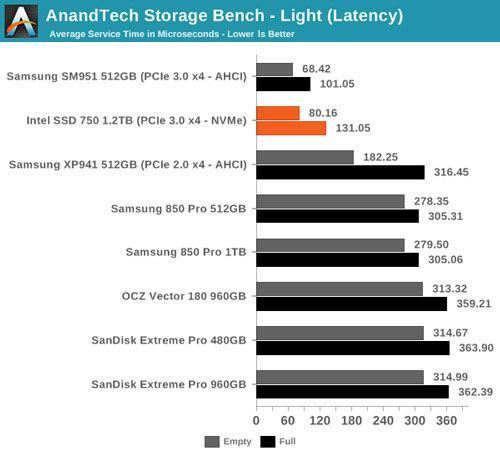

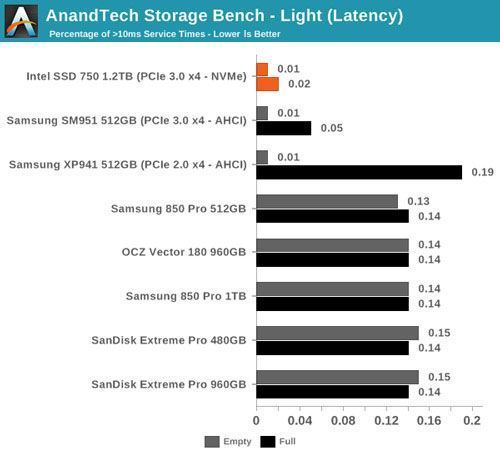

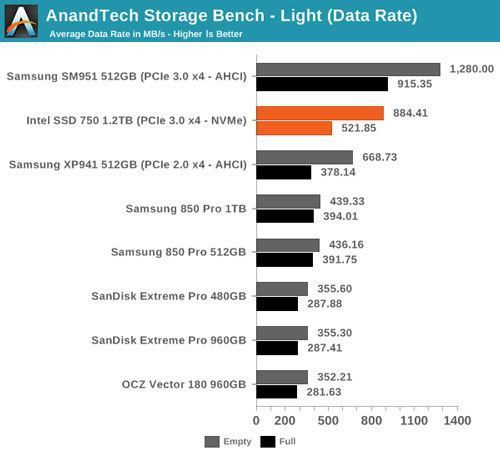

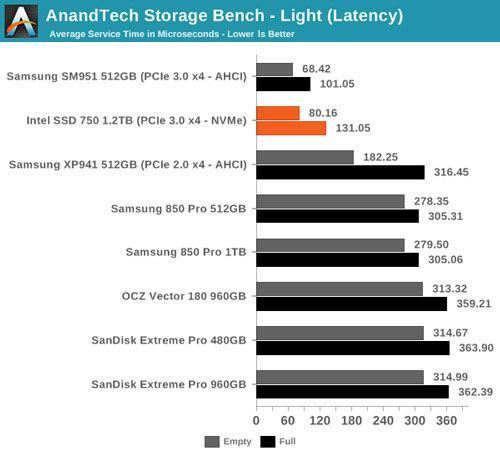

AnandTech Storage Bench - Light

The Light trace is designed to be an accurate illustration of basic usage. It's basically a subset of the Heavy trace, but we've left out some workloads to reduce the writes and make it more read intensive in general.

The Light trace still has more writes than reads, but a very light workload would be even more read-centric (think web browsing, document editing, etc). It has about 23GB of writes, which would account for roughly two or three days of average usage (i.e. 7-11GB per day).

The IO distribution of the Light trace is very similar to the Heavy trace with slightly more IOs being 128KB. About 70% of the IOs are sequential, though, so that is a major difference compared to the Heavy trace.

Over 90% of the IOs have a queue depth of one or two, which further proves the importance of low queue depth performance.

The same trend continues in our Light trace where the SM951 is still the king of the hill. It's obvious that Intel didn't design the SSD 750 with such light workloads in mind as ultimately you need to have a relatively IO intensive workload to get the full benefit of PCIe and NVMe.

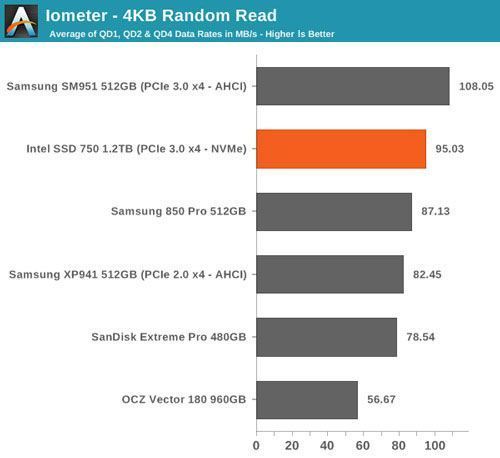

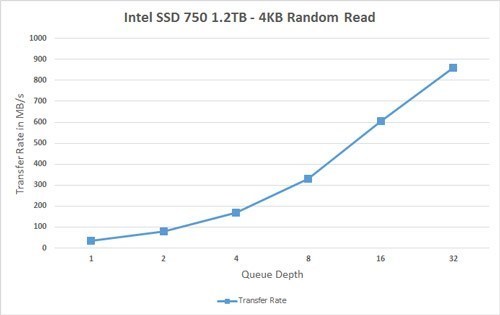

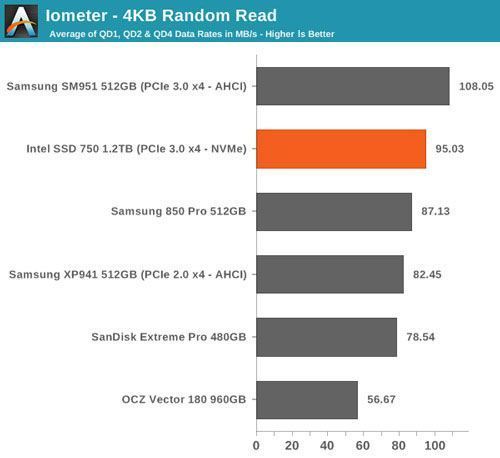

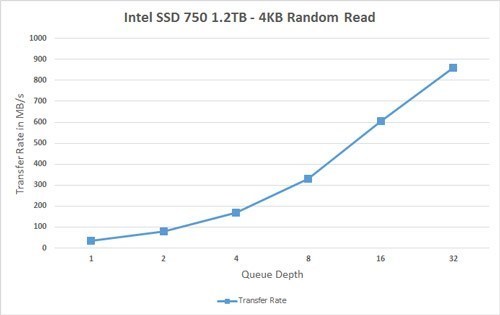

Random Read Performance

One of the major changes in our 2015 test suite is the synthetic Iometer tests we run. In the past we used to test just one or two queue depths, but real world workloads always contain a mix of different queue depths as shown by our Storage Bench traces. To get the full scope of performance, I'm now testing various queue depths starting from one and going all the way up to 32. I'm not testing every single queue depth, but merely how the throughput scales with the queue depth. I'm using exponential scaling, meaning that the tested queue depths increase in powers of two (i.e. 1, 2, 4, 8...).

Read tests are conducted on a full drive because that is the only way to ensure that the results are valid (testing with an empty drive can substantially inflate the results and in reality the data you are reading is always valid rather than full of zeros). Each queue depth is tested for three minutes and there is no idle time between the tests.

I'm also reporting two metrics now. For the bar graph, I've taken the average of QD1, QD2 and QD4 data rates, which are the most relevant queue depths for client workloads. This allows for easy and quick comparison between drives. In addition to the bar graph, I'm including a line graph, which shows the performance scaling across all queue depths. To keep the line graphs readable, each drive has its own graph, which can be selected from the drop-down menu.
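The queue depth sweep and the bar graph value boil down to very little logic, sketched here in Python. The helper names are hypothetical and the data rates are placeholders, not measured results.

```python
def queue_depths(max_qd=32):
    """Yield powers of two from 1 up to max_qd: 1, 2, 4, ..."""
    qd = 1
    while qd <= max_qd:
        yield qd
        qd *= 2

def low_qd_average(results, depths=(1, 2, 4)):
    """Average data rate over the queue depths used for the bar graph."""
    return sum(results[qd] for qd in depths) / len(depths)

# Hypothetical data rates in MB/s keyed by queue depth (not measured results)
results = {1: 40.0, 2: 80.0, 4: 150.0, 8: 280.0, 16: 500.0, 32: 800.0}

tested = list(queue_depths())
bar_value = low_qd_average(results)
print(tested)      # [1, 2, 4, 8, 16, 32]
print(bar_value)   # 90.0
```

Averaging only QD1, QD2 and QD4 keeps a drive that shines solely at QD32 from topping the bar graph; the full scaling still shows up in the line graph.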

I'm also plotting power for SATA drives and will be doing the same for PCIe drives as soon as I have the system set up properly. Our datalogging multimeter logs power consumption every second, so I report the average for every queue depth to see how the power scales with the queue depth and performance.

Despite having NVMe, the SSD 750 doesn't bring any improvements to low queue depth random read performance. Theoretically NVMe should be able to improve low QD random read performance because it adds less overhead compared to the AHCI software stack, but ultimately it's the NAND performance that's the bottleneck, although 3D NAND will improve that by a bit.

The performance does scale nicely, though, and at a queue depth of 32 the SSD 750 is able to hit over 200K IOPS. It's capable of delivering even more than that because unlike AHCI, NVMe can support more than 32 commands in the queue, but since client workloads rarely go above QD32 I see no point in testing higher queue depths just for the sake of high numbers.

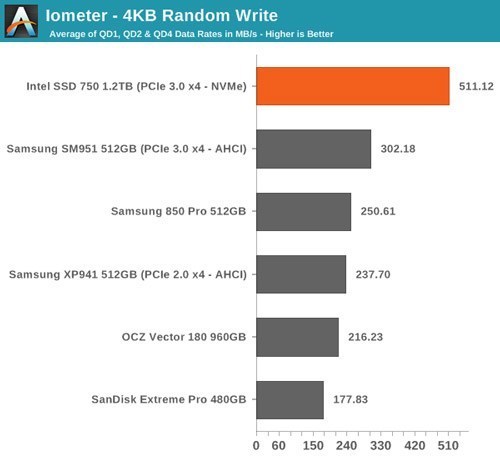

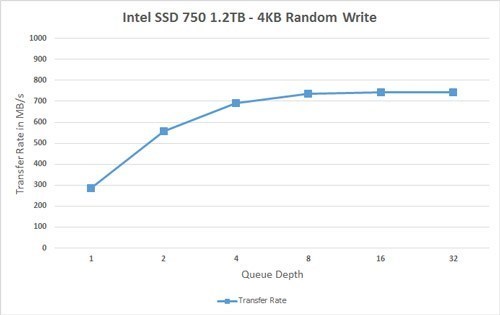

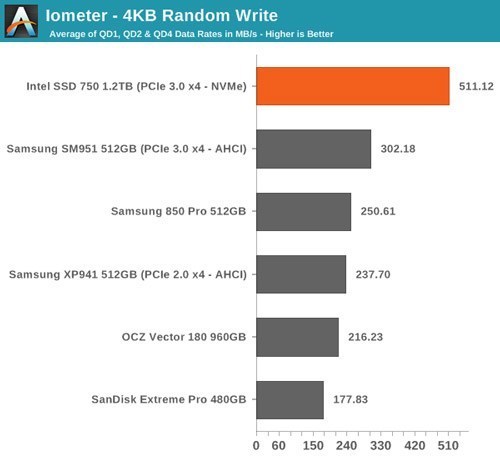

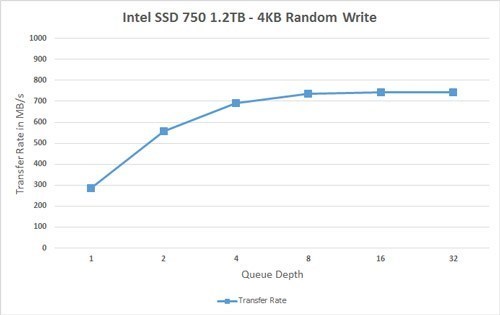

Random Write Performance

Write performance is tested in the same way as read performance, except that the drive is in a secure erased state and the LBA span is limited to 16GB. We already test performance consistency separately, so a secure erased drive and limited LBA span ensures that the results here represent peak performance rather than sustained performance.

In random write performance the SSD 750 dominates the other drives. It seems Intel's random IO optimization really shows up here because the SM951 doesn't even come close. Obviously the lower latency of NVMe helps tremendously and since the SSD 750 features full power loss protection it can also cache more data in DRAM without the risk of data loss, which yields substantial performance gains.

The SSD 750 also scales very efficiently and doesn't stop scaling until a queue depth of 8. Note how big the difference is at queue depths of 1 and 2 -- for any random write centric workload the SSD 750 is an absolute killer.

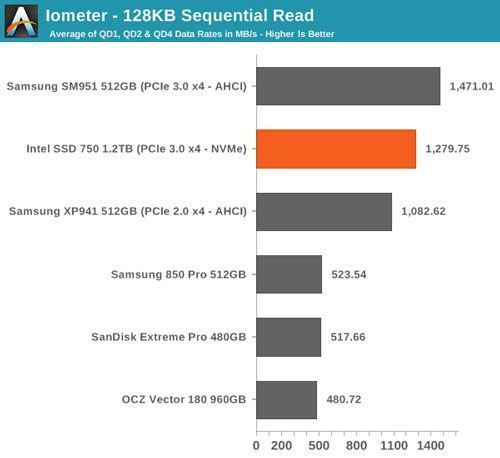

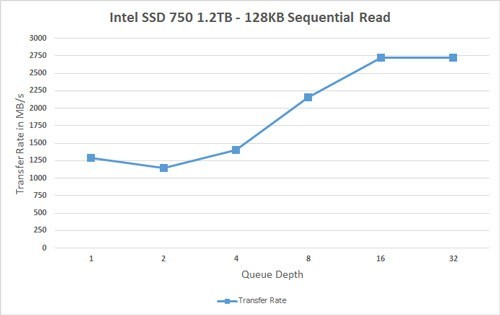

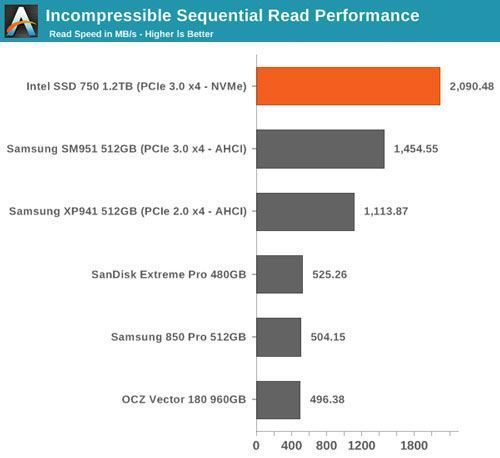

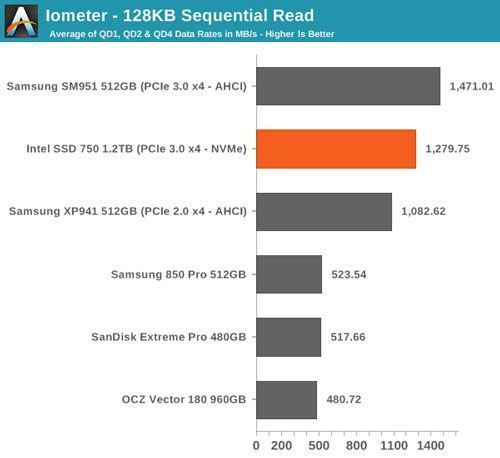

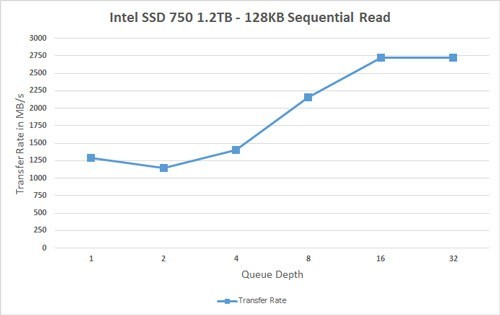

Sequential Read Performance

Our sequential tests are conducted in the same manner as our random IO tests. Each queue depth is tested for three minutes without any idle time in between the tests and the IOs are 4K-aligned similar to what you would experience in a typical desktop OS.

In sequential read performance the SM951 keeps its lead. While the SSD 750 reaches up to 2.75GB/s at high queue depths, the scaling at small queue depths is very poor. I think this is an area where Intel should have put in a little more effort because it would translate to better performance in more typical workloads.

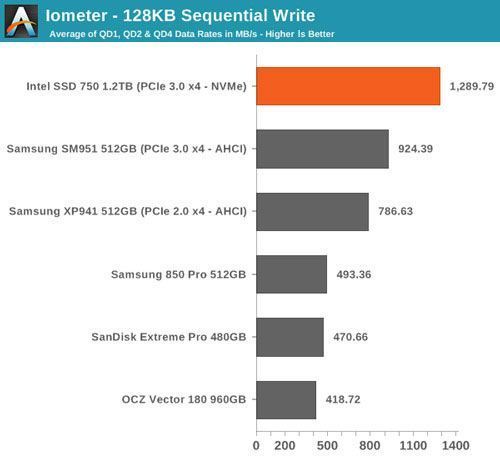

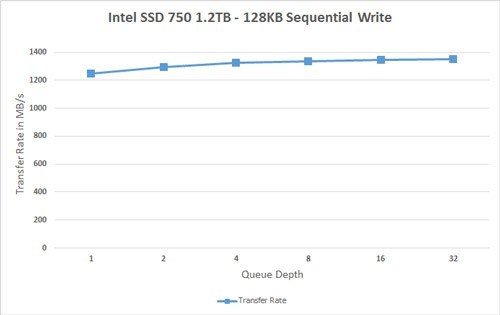

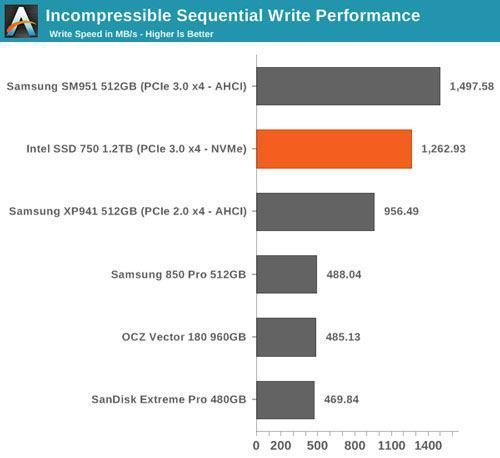

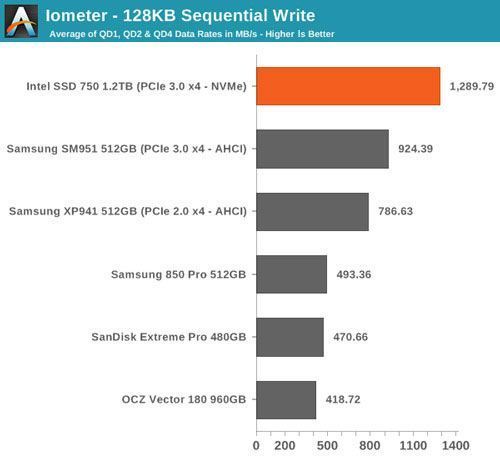

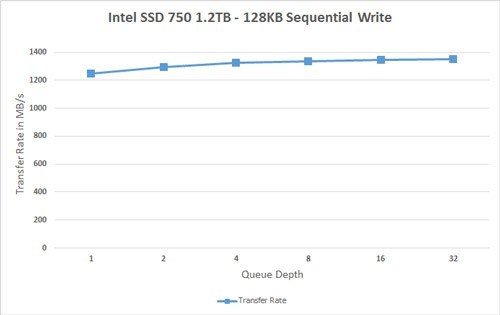

Sequential Write Performance

Sequential write testing differs from random testing in the sense that the LBA span is not limited. That's because sequential IOs don't fragment the drive, so the performance will be at its peak regardless.

The SSD 750 is faster in sequential writes than the SM951, but the difference isn't all that big when you take into account that the SSD 750 has more than twice the amount of NAND. The SM951 did have some throttling issues as you can see in the graph below and I bet that with a heatsink and proper cooling the two would be nearly identical because at queue depth of 1 the SSD 750 is only marginally faster. It's again a bit disappointing that the SSD 750 isn't that well optimized for sequential IO because there's practically no scaling at all and the drive maxes out at ~1350MB/s.

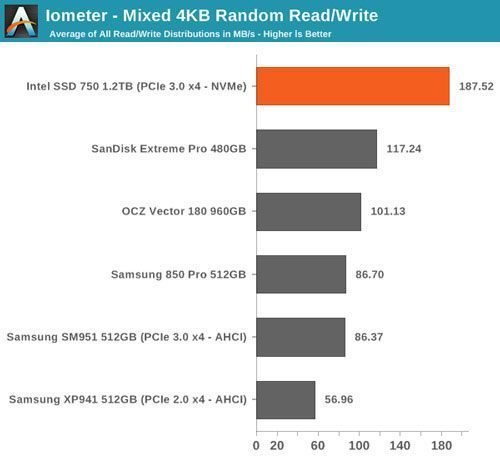

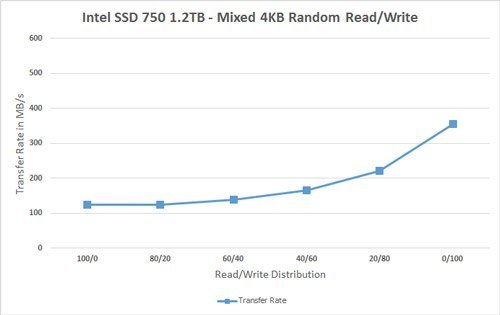

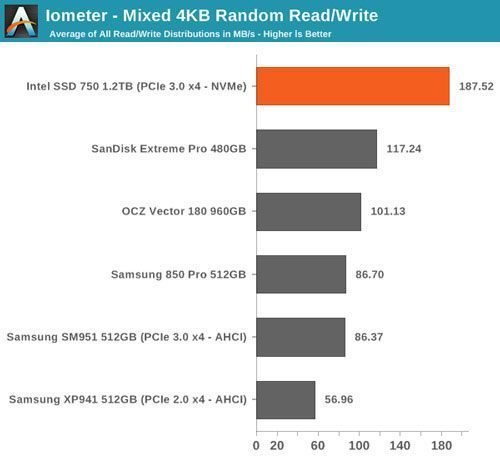

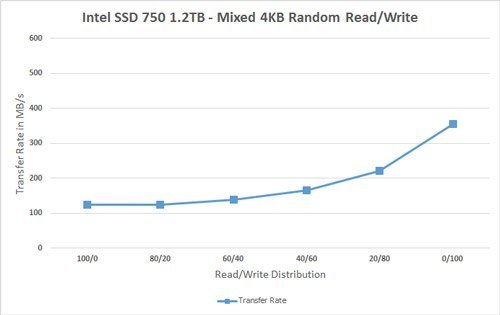

Mixed Random Read/Write Performance

Mixed read/write tests are also a new addition to our test suite. In real world applications a significant portion of workloads are mixed, meaning that there are both read and write IOs. Our Storage Bench benchmarks already illustrate mixed workloads by being based on actual real world IO traces, but until now we haven't had a proper synthetic way to measure mixed performance.

The benchmark is divided into two tests. The first one tests mixed performance with 4KB random IOs at six different read/write distributions starting at 100% reads and adding 20% of writes in each phase. Because we are dealing with a mixed workload that contains reads, the drive is first filled with 128KB sequential data to ensure valid results. Similarly, because the IO pattern is random, I've limited the LBA span to 16GB to ensure that the results aren't affected by IO consistency. The queue depth of the 4KB random test is three.

Again, for the sake of readability, I provide both an average-based bar graph as well as a line graph with the full data on it. The bar graph represents an average of all six read/write distribution data rates for quick comparison, whereas the line graph includes a separate data point for each tested distribution.
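For reference, the six read/write distributions and the bar graph value can be expressed in a few lines of Python. This is just a sketch with hypothetical helper names, and the data rates are placeholders rather than results from any drive.

```python
def read_write_mixes(step=20):
    """(read %, write %) pairs going from 100% reads to 100% writes."""
    return [(100 - writes, writes) for writes in range(0, 101, step)]

def bar_graph_value(rates):
    """Average the data rates of all tested distributions."""
    return sum(rates) / len(rates)

mixes = read_write_mixes()
# Hypothetical data rates in MB/s, one per mix (placeholders, not measurements)
rates = [100.0, 110.0, 120.0, 130.0, 140.0, 160.0]

for (reads, writes), rate in zip(mixes, rates):
    print(f"{reads:3d}% read / {writes:3d}% write: {rate:.0f}MB/s")
print(f"bar graph value: {bar_graph_value(rates):.1f}MB/s")
```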

The SSD 750 does very well in mixed random workloads, especially when compared to the SM951 that is slower than most high-end SATA drives. The performance scales quite nicely as the portion of writes is increased.

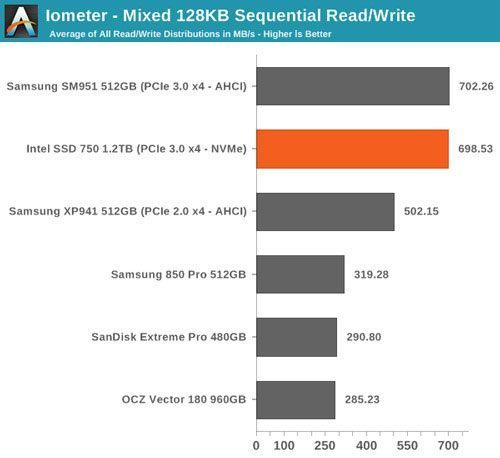

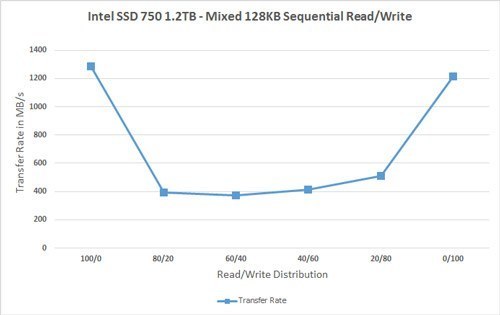

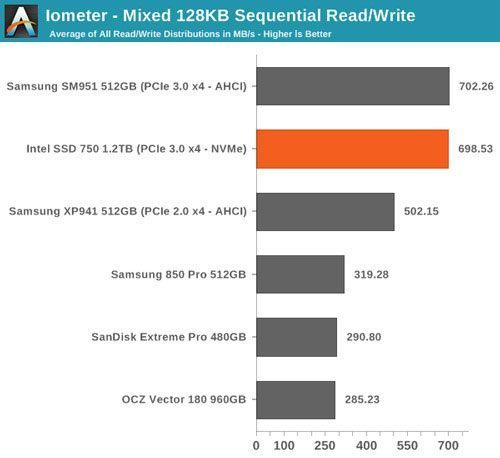

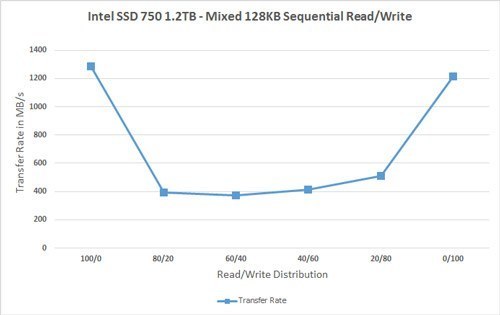

Mixed Sequential Read/Write Performance

The sequential mixed workload tests are also tested with a full drive, but I've not limited the LBA range as that's not needed with sequential data patterns. The queue depth for the tests is one.

In mixed sequential workloads, however, the SSD 750 and SM951 are practically identical. Both deliver excellent performance at 100% reads and writes, but the performance does drop significantly once reads and writes are mixed. Even with the drop, the two push out 400MB/s whereas most SATA drives manage ~200MB/s, so PCIe certainly has a big advantage here.

ATTO - Transfer Size vs Performance

I'm keeping our ATTO test around because it's a tool that can easily be run by anyone and it provides a quick look into performance scaling across multiple transfer sizes. I'm providing the results in a slightly different format because the line graphs didn't work well with multiple drives and creating the graphs was rather painful since the results had to be manually inserted cell by cell as ATTO doesn't provide a 'save as CSV' functionality.

AS-SSD Incompressible Sequential Performance

I'm also keeping AS-SSD around as it's freeware like ATTO and can be used by our readers to confirm that their drives operate properly. AS-SSD uses incompressible data for all of its transfers, so it's also a valuable tool when testing SandForce based drives that perform worse with incompressible data.

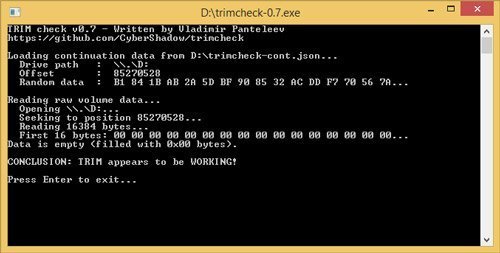

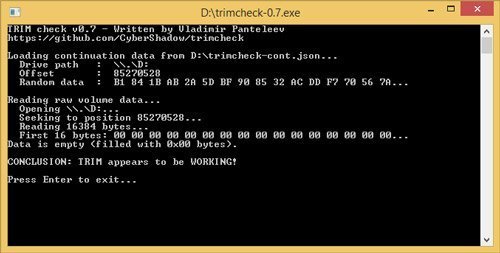

TRIM Validation

The move from Windows 7 to 8.1 introduced some problems with the methodology we have previously used to test TRIM functionality, so I had to come up with a new way to test. I tested a couple of different methods, but ultimately I decided to go with the easiest one that can actually be used by anyone. The software is simply called trimcheck and it was made by a developer who goes by the name CyberShadow on GitHub.

Trimcheck tests TRIM by creating a small, unique file and then deleting it. Next the program will check whether the data is still accessible by reading the raw LBA locations. If the data that is returned by the drive is all zeros, it has received the TRIM command and TRIM is functional.
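The decision step described above can be sketched as follows. This is not trimcheck's actual code: the function name is hypothetical, reading the raw LBAs is left out entirely (it needs administrator access and is platform specific), and the "inconclusive" branch is an assumption added for readbacks that are neither the original data nor all zeros.

```python
def classify_readback(original: bytes, readback: bytes) -> str:
    """Interpret raw sectors read back after the test file was deleted."""
    if readback == original:
        return "data still present: TRIM did not take effect"
    if not any(readback):
        return "all zeros: TRIM appears to work"
    return "inconclusive: sectors changed but are not zeroed"

marker = bytes(range(1, 256)) * 2   # stand-in for the small, unique test file

print(classify_readback(marker, marker))              # deleted data still readable
print(classify_readback(marker, bytes(len(marker))))  # drive returns zeros
```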

And as expected TRIM appears to be working.

Final Words

For years Intel has been criticized for not caring about the client SSD space anymore. The X25-M and its different generations were all brilliant drives and essentially defined the standards for a good client SSD, but since then none of Intel's client SSDs have had the same "wow" effect. That's not to say that Intel's later client SSDs have been inferior, it's just that they haven't really had any competitive advantage over the other drives on the market. It's no secret that Intel changed its SSD strategy to focus on the enterprise segment and frankly it still makes a lot of sense because the profits are more lucrative and enterprise has a lot more room for innovation as the customers value more than just rock-bottom pricing.

With the release of the SSD 750, it's safe to say that any argument of Intel not caring about the client market is now invalid. Intel does care, but rather than bringing products with new complex technologies to the market at a very early stage, Intel wants to ensure that the market is ready and there's industry-wide support for the product. After all, NVMe requires BIOS support and that support has only been present for a few months now, making it logical not to release the SSD 750 any sooner.

Given the enterprise background of the SSD 750, it's more optimized for consistency than raw peak performance. The SM951, on the other hand, is a more generic client drive that concentrates on peak performance to improve performance under typical client workloads. That's visible in our benchmarks because the only test where the SSD 750 is able to beat the SM951 is The Destroyer trace, which illustrates a very IO intensive workload that only applies to power users and professionals. It makes sense for Intel to focus on that very specific target group because those are the people who are willing to pay a premium for higher storage performance.

With that said, I'm not sure if I fully agree with Intel's heavy random IO focus. The sequential performance isn't bad, but I think the SSD 750 as it stands today is a bit unbalanced and could use some improvements to sequential performance even if it came at the cost of random performance.

RamCity actually just got its first batch of SM951s this week, so I've included it in the table for comparison (note that the prices on RamCity's website are in AUD, so I've translated them into USD and also subtracted the 10% tax that is only applicable to Australian orders). The SSD 750 is fairly competitive in price, although obviously you have to fork out more money than you would for a similar capacity SATA drive. Nevertheless, going under a dollar per gigabyte is very reasonable given the performance and full power loss protection that you get with the SSD 750.

All in all, the SSD 750 is definitely a product I recommend as it's the fastest drive for IO intensive workloads by a large margin. I can't say it's perfect and for slightly lighter IO workloads the SM951 wins my recommendation due to its more client-oriented design, but the SSD 750 is really a no compromise product that is aimed at a relatively small high-end niche, and honestly it's the only option worth considering in its niche. If your IO workload needs the storage performance of tomorrow, Intel and the SSD 750 have you covered today.

Written by Kristian Vatto of AnandTech on April 2, 2015 12:00 PM

Intel Unleashes NVMe SSD 750 Series For Consumer PCs

The PC storage world was undeniably boring, and spinning, before SSDs came along. SSDs were expensive and somewhat exotic at first, but still worth every penny. SSDs deliver revolutionary speed that unlocks the true potential of the CPU, and you cannot seriously claim to have a performance PC today if it doesn't have a slab of flash attached to the SATA port.

Therein lies the problem. The SATA connection was designed for old spinning HDDs and not optimized to provide the ultra-low latency and blistering speed that SSDs crave. SSDs outstripped the limitations of the trusty old SATA port over the last few years, and prices have dropped to the point of commoditization. New SSDs are only making small incremental gains with each release, and it seems the days of big performance boosts are over.

...until NVMe came to save the day. NVMe is a refined interface designed from the ground up for non-volatile memories, which includes NAND flash and future PCM/MRAM, and it runs over the inherently faster PCIe bus. In other words, it's more than fast enough for a bunch of NAND chips packed onto a PCB. Unfortunately, NVMe made its debut in the enterprise space. Salivating enthusiasts the world over had to live vicariously through enterprise hardware reviews, impatiently waiting for the day NVMe finally made its way to the desktop.

Intel has ended the waiting with the new NVMe Intel SSD 750 Series, designed for enthusiasts and gamers alike, that lays claim to the title of the fastest storage hardware available to consumers.

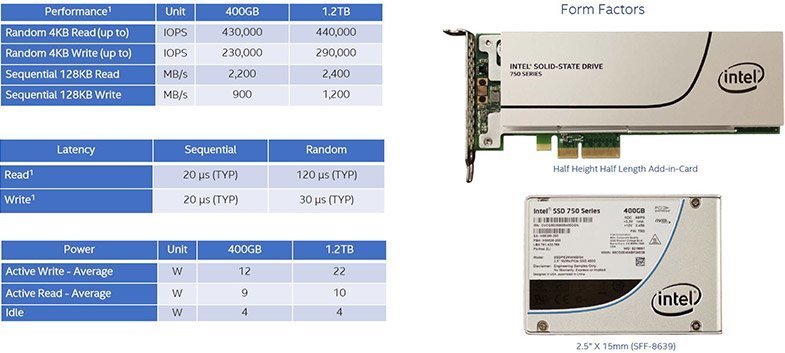

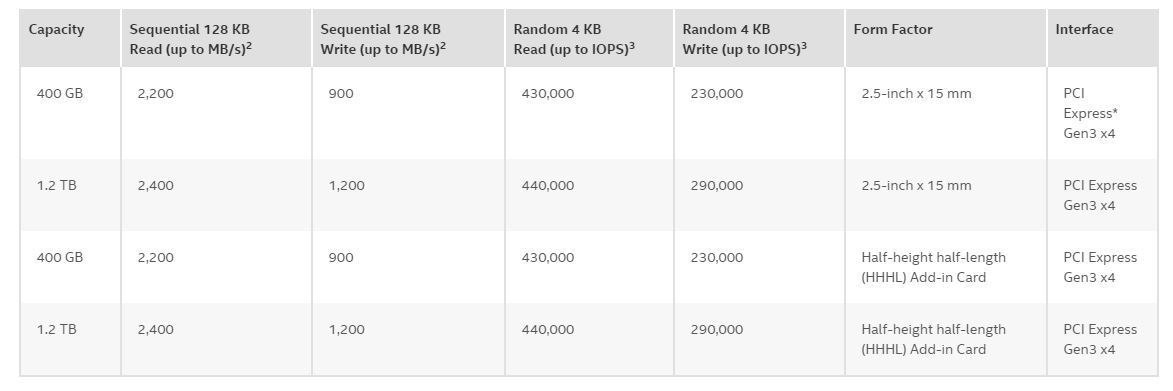

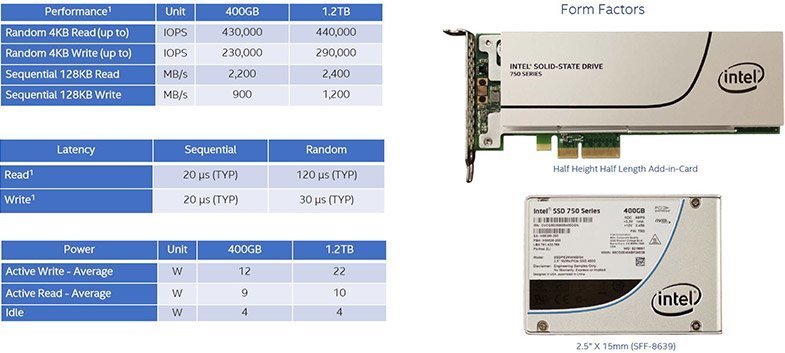

The 750 Series comes in two capacities of 400 GB and 1.2 TB and leverages a PCIe 3.0 x4 connection to blast out up to 2,400 MBps of sequential read speed and 1,200 MBps of sequential write speed (1.2 TB model). For IOPS, the 750 offers up to 440,000 read and 290,000 write. That is nearly quadruple the IOPS performance of anything else on the market, including the latest m.2 models.

The Intel 750 Series SSDs certainly aren't going to fit in your laptop, but Intel is turning the storage world on its ear by also offering 2.5" versions. These still communicate via PCIe 3.0 x4 and offer the same exceptional performance as the larger Add-In Cards (AIC). Most 2.5" SSDs are 7 mm thick, but the 750 Series are 15 mm and will leverage an SFF-8639-compatible connector to connect to special connectors on motherboards. The cable will connect to an SFF-8643 connector, and motherboard manufacturers already have embedded connectors coming to market. ASUS also has an m.2-to-8643 connector, and we expect others will use this approach as well.

The 750s are bootable with the UEFI stack, but Intel is only guaranteeing compatibility with Z97 and X99 platforms with Windows 7 64-bit (and up) at this time. This doesn't mean the drives will not work with all prior chipsets, just that compatibility (particularly for booting) will be spotty. Intel is compiling a compatibility matrix for older hardware that will be available to the public. There is the chance that motherboard vendors will update older UEFI stacks to be compatible with the new cards, but that remains to be seen.

The 750 Series is a re-purposed and slightly modified version of the Intel P3x00 DC (Data Center) Series. The 750 features Intel L85C 20 nm MLC NAND connected to a monstrous 18-channel controller, and 9 percent overprovisioning provides 70 GB of write endurance per day for the five-year warrantied period. This will be more than enough for the average consumer, and even enough for some prosumer applications.

What else could one possibly want? Well, a reasonable price is going to be at the top of the list for most. The 750 Series features an MSRP of $389 for the 400 GB model and $1,029 for the 1.2 TB. Perhaps the only oddity is the lack of an 800 GB model. With the amazingly low cost, especially when compared to the price of other PCIe SSDs with a fraction of the performance, Intel should have a winner on its hands.

There really is no other storage hardware on the market to even compare to the Intel 750; it simply doesn't get faster for consumers. Did I say fire-breathing? The 750 Series is available now, and at less than $1 per GB we hope Intel has a few million of these in stock. Look to these pages soon for our full review with real-world testing.

Written by Paul Alcorn of Tom's Hardware on April 2, 2015 9:00 AM

Intel Pushed SSD Limits with Latest 750 Drives

PCIe-based SSDs are becoming more and more popular with computer enthusiasts thanks to lower prices and superior bandwidth when compared with SATA drives. SSD manufacturers like OCZ have capitalized on this market by introducing the OCZ RevoDrive 350, which we liked so much that we included it in Jackhammer, our editorial PC.

Not one to shy away from the competition, Intel has released its latest and greatest PCIe SSD, the SSD 750 drives, which promise to deliver the best performance from Intel to date. Intel claims the drives are capable of 2,400MB/s sequential reads and 1,200MB/s sequential writes, which according to Intel are four times faster than SATA-based SSDs.

The two drives are available in 400GB and 1.2TB capacities, and command prices of $470 and $1,200 respectively.

Performance

While the selling point of the 1.2TB Intel SSD 750 drives will be their 2,400MB/s and 1,200MB/s sequential Read and Write speeds, the 1.2TB version also promises to have a Read rating of 400,000 IOPS (Input/Output Operations Per Second) and a Write rating of 290,000 IOPS, which is a lot higher than the 135,000/140,000 Read/Write rating of the OCZ RevoDrive 350.

Although Intel hasn’t given different endurance ratings for either the 400GB or the 1.2TB model, it promises that its drives will have a MTBF (Mean Time between Failures) of 1.2 million hours, and that its drives are able to write up to 70GB per day for a total of 219TB of total writes.
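As a quick sanity check on how the two endurance figures relate, the arithmetic can be worked through in Python. This is a rough sketch that assumes decimal units (1 TB = 1,000 GB), 365-day years, and the five-year warranty period quoted elsewhere for this drive; the function names are made up for the example.

```python
GB_PER_TB = 1000      # assumption: decimal units, as drive vendors usually quote
DAYS_PER_YEAR = 365   # assumption: leap days ignored


def total_writes_tb(gb_per_day: float, years: float) -> float:
    """Total data written, in TB, at a steady daily write rate."""
    return gb_per_day * DAYS_PER_YEAR * years / GB_PER_TB


def years_to_reach(total_tb: float, gb_per_day: float) -> float:
    """Years needed to accumulate `total_tb` at a steady daily write rate."""
    return total_tb * GB_PER_TB / (gb_per_day * DAYS_PER_YEAR)


print(total_writes_tb(70, 5))             # 127.75
print(round(years_to_reach(219, 70), 2))  # 8.57
```

Under these assumptions, 70GB per day over five years adds up to roughly 128TB, while 219TB would take about eight and a half years at that rate. The two quoted figures are therefore presumably defined against different conditions, which the source does not spell out.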

As far as power consumption is concerned, the Intel 750 SSD isn’t much of an energy hog. According to TechSpot, the average draw of the 1.2TB version of the Intel 750 SSD was 22 watts while writing, 10 watts while reading, and 4 watts while idle.

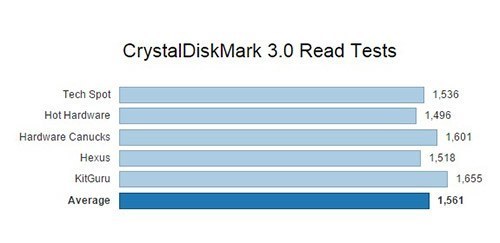

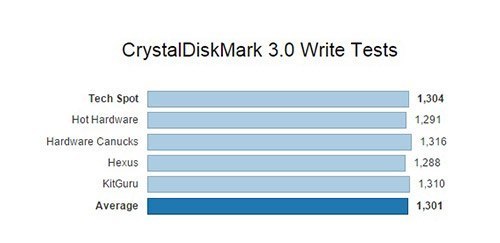

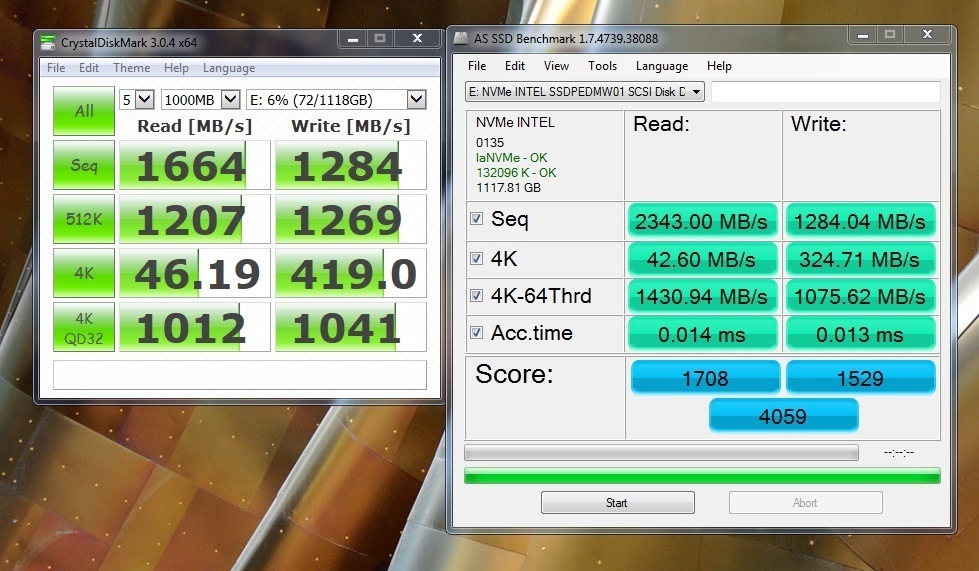

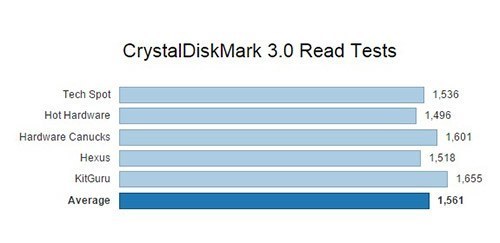

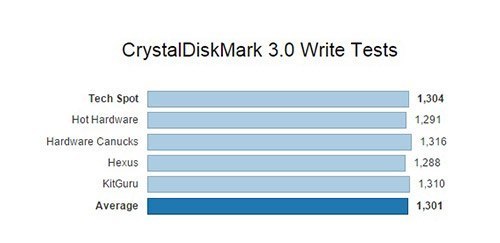

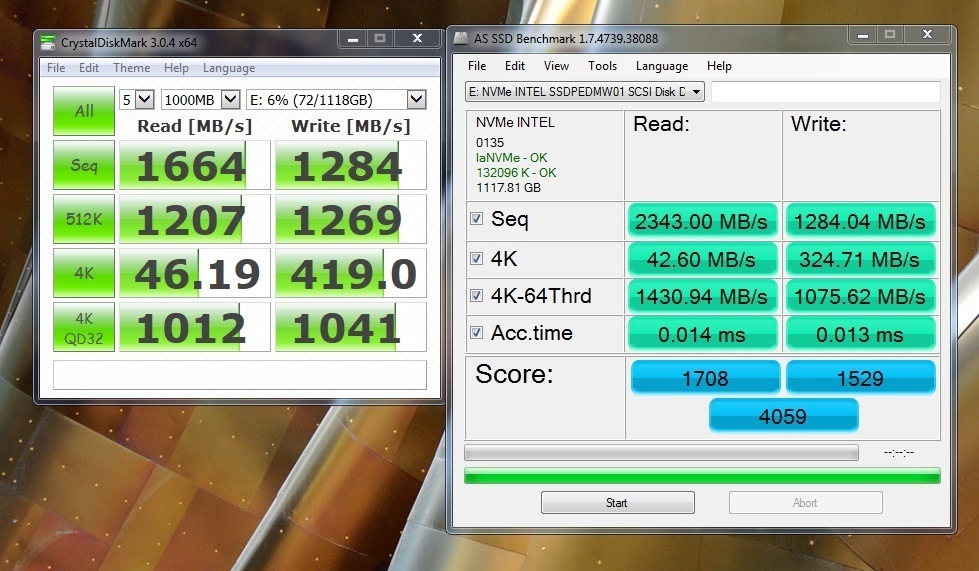

Intel’s numbers are certainly impressive, but it’s interesting to see how the SSDs perform with synthetic tests. We scoured the preliminary reviews from tech publications like Hot Hardware and KitGuru to see what the results were. For consistency’s sake, we decided to focus on the industry-standard CrystalDiskMark 3.0 sequential Read and Write tests to see how disks fared across the publications in question.

As the averages for both tests show, Intel has managed to beat its own estimate on sequential Write performance by an extra 100 MB/s on average, while coming in at about 65% of the claimed performance where Read operations are concerned.

While Intel’s numbers seem to be a little off when looking at the CrystalDiskMark 3.0 tests, we have to remember that the test itself is synthetic, and shouldn’t be substituted for real-world numbers.

The Takeaways

Intel’s latest SSD 750 drives bring enterprise-level performance at an enthusiast-friendly price. While there seems to be a pretty large gap between the 1.2TB and 400GB capacities (which may be filled in the future) Intel’s 400GB offering is great for someone who wants to create an extra-speedy boot drive and get stellar load times from resource-intensive applications like video games.

For professionals who need to use demanding video editing software, the 1.2TB solution will be entirely reasonable when taking into account the time saved with jaw-dropping 1,200MB/s+ write speeds. Lastly, the future-proofness of the Intel SSD 750 drives cannot be overstated–with 70GB of possible writes per day and 219TB of total writes, these drives are good to go for many, many years.

Written by Karim Lahlou of GameCrate on April 6, 2015

Gaming with the Intel 750 SSDs

When looking at the basic read/write benchmarks for Intel’s new 750 series SSDs only one technical term comes to mind: bananas. The numbers are bananas.

The Urban Dictionary definition of something being bananas is to say that it is unbelievable, silly, balderdash. Since balderdash has fallen out of common usage, we’ll go with bananas.

A few weeks back I had the opportunity to speak with Jeff Frik, an engineer at Intel, about these drives. We spoke specifically about the add-in card version that was tested for this article, as well as NVMe. “Non-Volatile Memory express,” he explained, “is a protocol and command set that was designed from the ground-up for non-volatile memory storage.” NVMe cuts loose the shackles of the AHCI legacy. AHCI is the old protocol designed for spinning media. It was obvious to the engineers at Intel that a new protocol needed to be made. NVMe on the 750 SSDs was designed to reduce latency and increase parallelism.

Intel has also decided to accommodate the small form factor builder with a 2.5” version of the drive. The development of this smaller drive proved fairly challenging as the limitations of SATA III would cut the drive off at the knees. Working with ASUS they developed the 8639 connector and the mini SAS-HD adapter, which ASUS has named the “Hyper Kit,” to allow the SSD to directly interface with the m.2 port on their motherboards. This allows the 2.5" drive the same access to PCIe 3.0 x4 that the add-in card is getting. This gives both SSDs 32 Gbit/s of bandwidth vs. 6 Gbit/s on SATA III. Bananas.
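For a sense of what those link speeds mean in bytes, here is a small back-of-the-envelope calculation. It is a sketch built on the standard line rates and encodings (PCIe 3.0 at 8 GT/s per lane with 128b/130b encoding, SATA III at 6 Gbit/s with 8b/10b encoding) and it ignores protocol overhead above the encoding layer; the function names are invented for the example.

```python
def usable_gbit_per_s(raw_gbit_per_lane: float, lanes: int,
                      payload_bits: int, total_bits: int) -> float:
    """Link bandwidth left after line encoding, in Gbit/s."""
    return raw_gbit_per_lane * lanes * payload_bits / total_bits


def to_mb_per_s(gbit_per_s: float) -> float:
    """Convert Gbit/s to MB/s using decimal units (1 Gbit = 125 MB)."""
    return gbit_per_s * 1000 / 8


pcie3_x4 = usable_gbit_per_s(8.0, 4, 128, 130)  # four PCIe 3.0 lanes
sata3 = usable_gbit_per_s(6.0, 1, 8, 10)        # one SATA III link

print(f"PCIe 3.0 x4: {pcie3_x4:.1f} Gbit/s, about {to_mb_per_s(pcie3_x4):.0f} MB/s")
print(f"SATA III:    {sata3:.1f} Gbit/s, about {to_mb_per_s(sata3):.0f} MB/s")
```

After encoding, the x4 link has roughly 3.9 GB/s to work with against about 600 MB/s for SATA III, which is why a drive rated for 2,400 MB/s reads cannot be served by a SATA port.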

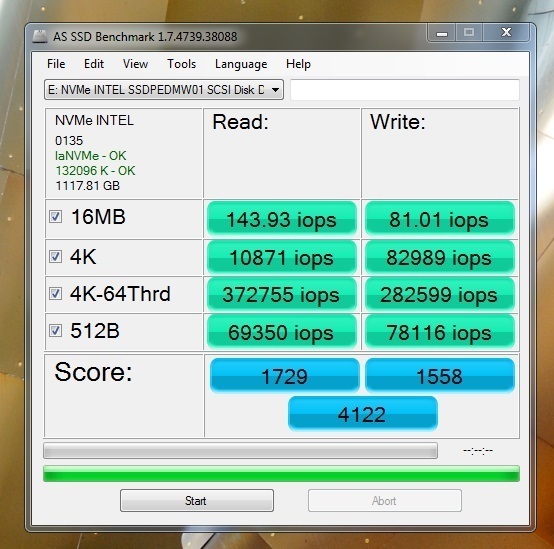

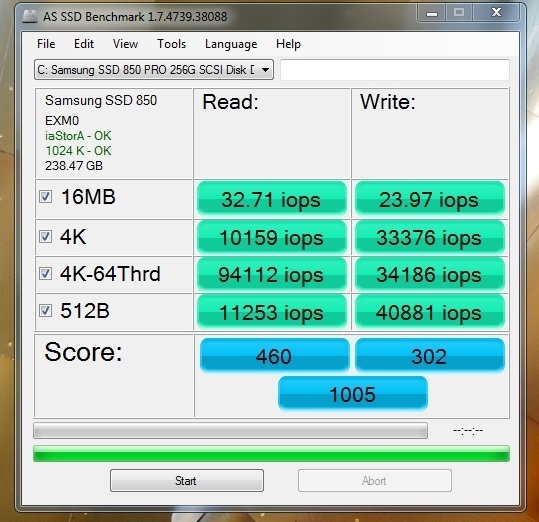

More bananas numbers can be seen from basic read/write tests. Using AS SSD after the drive had around 50G of game data written to it, it demonstrated a sequential read of 2343.0 MB/s and a write of 1284.04 MB/s. I fully admit to giggling uncontrollably for about 30 seconds after that result came up. The access times are, as near as makes no difference, 0.014 ms and 0.013 ms. Each drive is using an 18 channel controller. Neat.

Here is a look at the published specs for both the add-in card and the 2.5” drive:

I was able to duplicate these numbers fairly closely on both CrystalDiskMark 3.0.4 x64 and AS SSD:

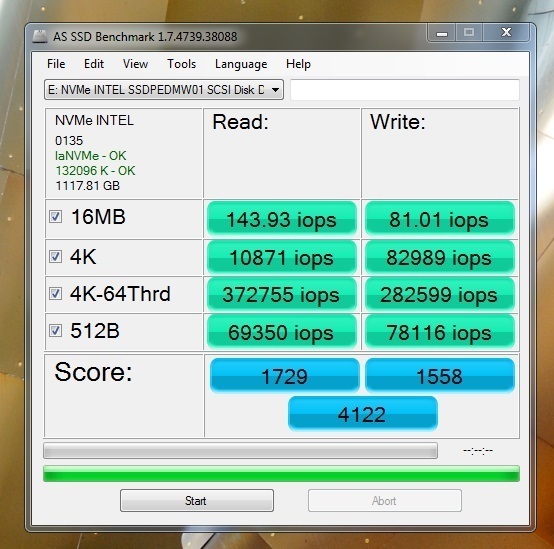

In IOPS:

Up against the competitors

These benchmark tools are great for just raw testing data, but only real world use cases will demonstrate if a given piece of hardware is going to be a benefit to a system.

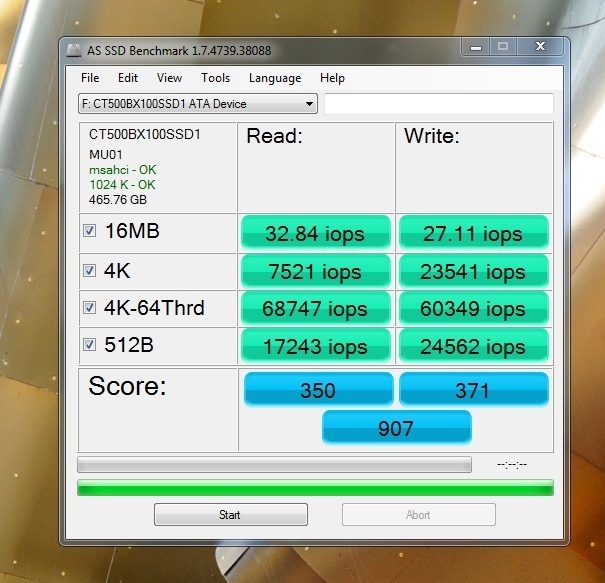

For this, two other SSDs were chosen for comparison: the Crucial BX100 and the Samsung 850 Pro. No mechanical drives were chosen because we wanted a clean race. Why line up the Lamborghinis and then throw a Honda in? We also wanted to see if there was a noticeable difference between drives of different price points. Of course, it’s important to note that continuous long-term use is usually where SSDs show their quality.

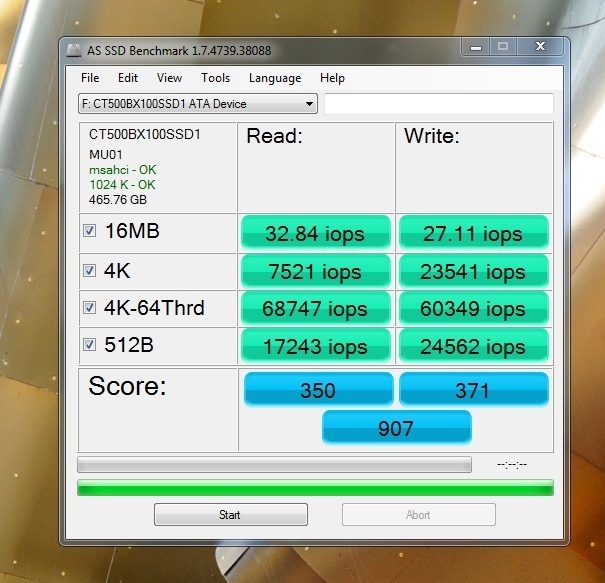

Here are the basic tests for both comparison drives.

The Crucial BX100 500G:

In IOPS:

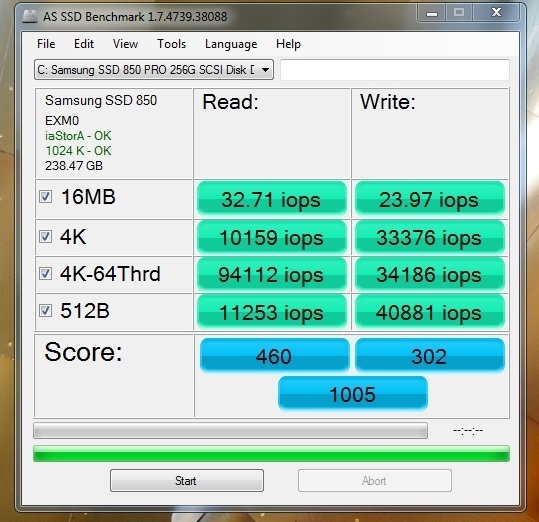

Samsung 850 Pro 256G:

In IOPS:

It’s worth mentioning that the Samsung drive is also the primary boot drive of the benchmarking system and posted these numbers at 93% capacity.

Speaking of the benchmarking system, here is the hardware in the testing rig:

Motherboard: ASUS Rampage V Extreme

CPU: Intel Core i7-5960X running at 3.0 GHz

Memory: GSkill DDR4, 16G

GPU: ASUS Direct CU II Radeon R9 290X

PSU: Rosewill Hercules 1600W

Your results will vary on any of the drives tested depending on your rig. I had the fortune of using the optimal configuration recommended by ASUS and Intel.

Gaming benchmarks

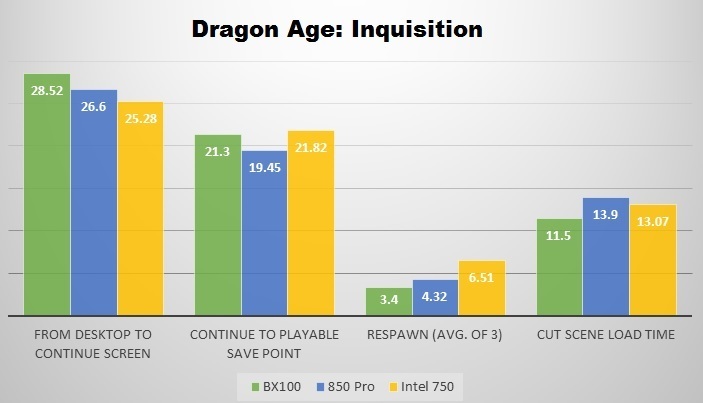

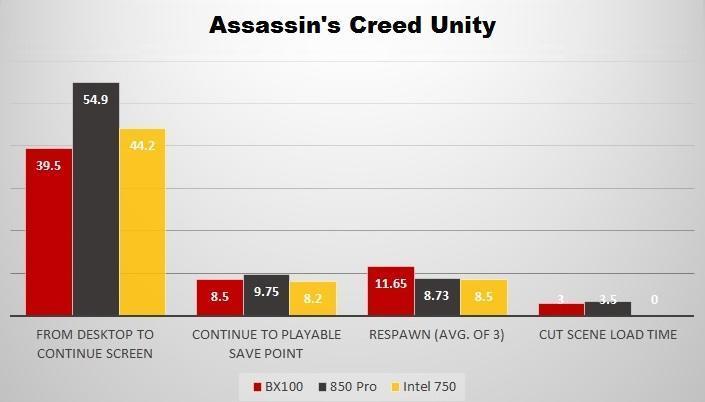

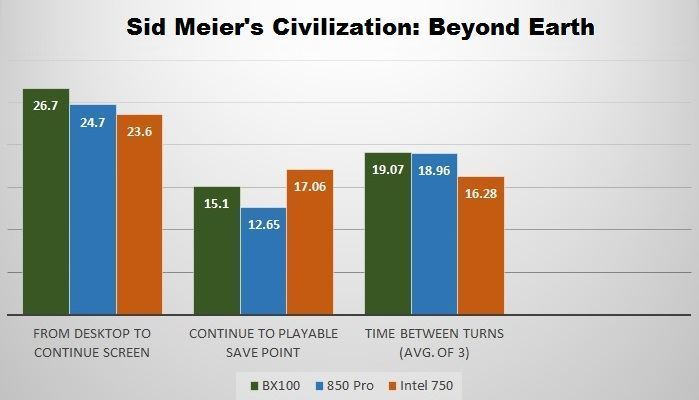

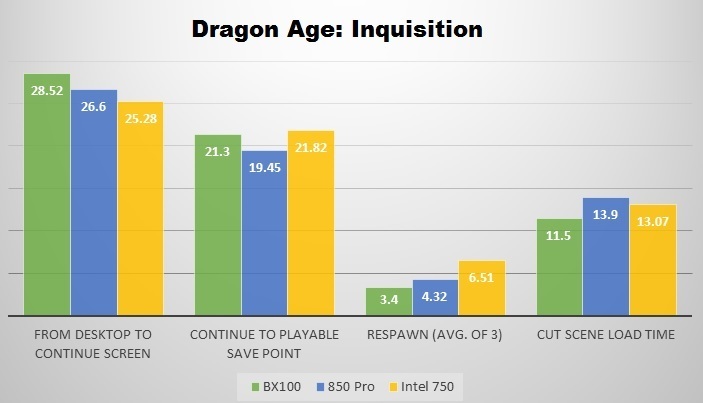

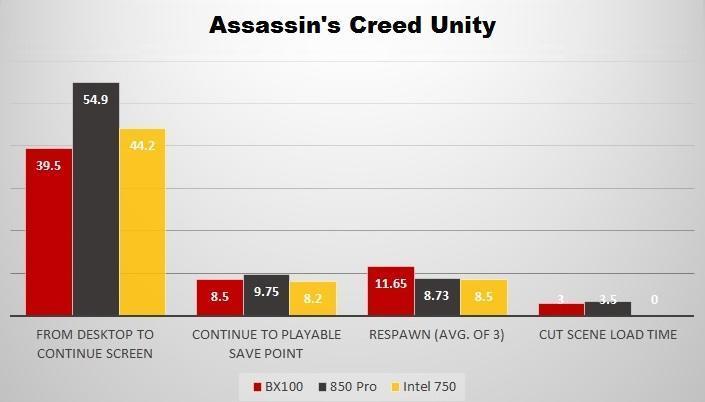

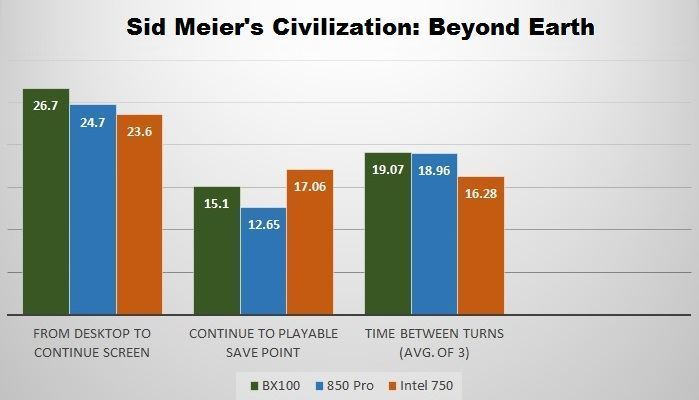

The real world use case chosen for these drives was game load times. The three games selected were Dragon Age: Inquisition, Assassin’s Creed Unity, and Sid Meier’s Civilization: Beyond Earth. The first two games were chosen because of the high volume of the game files and Civ: BE was chosen because any fan knows how load times can lag between turns, especially late in the game. We ran our test at turn 320.

The four data points collected for Dragon Age and Assassin’s Creed were:

1. From Desktop to Continue Screen.

2. From Continue to loading saved game.

3. Respawn time, from an average of three attempts.

4. Cut scene load time.

This last one was tricky with Assassin’s Creed, as the game was designed to move through cut scenes seamlessly. There is still some short waiting in certain areas, and we took the average of three load screens to get a fair number.

The three data points collected for Civ: BE were:

1. From Desktop to Continue.

2. From Continue to saved game.

3. Time between turns, from an average of three attempts.

Here are the results. Time is in seconds, lower is better:

The results of these tests are interesting because they demonstrate that each of the drives tested has strong points and weak ones. Where the Intel drive excels is in-game loading. This was most noticeable in Assassin’s Creed. Though I cannot quantify it with data, that game felt much snappier when played on the Intel drive, and there was no perceivable time between gameplay and cut scenes.

One area left out of the formal graphs was the file transfers on and off of the Intel drive. As I moved the game files around from drive to drive I started recording transfer times. The 26.4G of Dragon Age took around 1:30 to go from the Samsung drive to the Intel drive, but only 45 seconds to go from the Intel drive to the BX100.
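Those stopwatch times translate into average throughput as follows. This is a rough sketch that assumes "26.4G" means 26.4 decimal gigabytes and treats "around 1:30" as exactly 90 seconds; the function name is made up for the example.

```python
def average_mb_per_s(size_gb: float, seconds: float) -> float:
    """Average transfer rate in MB/s, using decimal units (1 GB = 1,000 MB)."""
    return size_gb * 1000 / seconds


samsung_to_intel = average_mb_per_s(26.4, 90)  # about 1:30 by the stopwatch
intel_to_bx100 = average_mb_per_s(26.4, 45)

print(f"Samsung 850 Pro -> Intel 750: about {samsung_to_intel:.0f} MB/s")
print(f"Intel 750 -> Crucial BX100:   about {intel_to_bx100:.0f} MB/s")
```

Both averages, roughly 293 MB/s and 587 MB/s, sit at or below what a SATA III link can carry, so in each copy the SATA drive on the other end was most likely the limiting factor rather than the Intel drive.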

After gaming solely from SSDs, it will be a difficult transition from the test bench to my personal rig, which is no slouch. Nevertheless, like most of you I suspect, I will be saving up for some storage upgrades in the near future.

Written by Jennifer Rourke of GameCrate on April 24, 2015